Intro To Recording: Day 1

It was a very crowded room Tuesday evening in A202 of La Corte Hall at California State University, Dominguez Hills. I held the first meeting of “Introduction to Audio Recording” for a packed house of 45 students (in a class that the administration capped at 25). After handling the normal chores of reading the roster and explaining the nature of the course, I launched into the first lecture. This class meets only once a week, so I have to pack in a lot of information and keep the group somewhat entertained for almost three hours. I think last evening went pretty well. I thought I would share the substance of my first lecture online because it focused on acoustics and the relationship between the physics of sound and the world of music. We tend to focus on the post-performance side of the music pipeline, but it’s important to understand a little about the raw materials: the acoustic energy that comes from voices and instruments.

Acoustics is somewhat broader than just the science of sound. It deals with vibration, sound, infrasound, and ultrasound. The term acoustics is derived from the Greek word “akoustikos”, which means “of or for hearing, ready to hear”, but the field extends both below and above the range of human hearing.

Musical instruments, voices, mechanical devices, and other natural phenomena produce oscillations or periodic motions that can excite our ears, resulting in electrical impulses to our brains. I have a great computer animation called “Auditory Transduction”, produced by Brandon Pietsch, on the anatomy of our ears and the process of hearing. I’m not sure if it’s available online, but it is very well done.

Basic acoustics deals with three primary areas. These are:

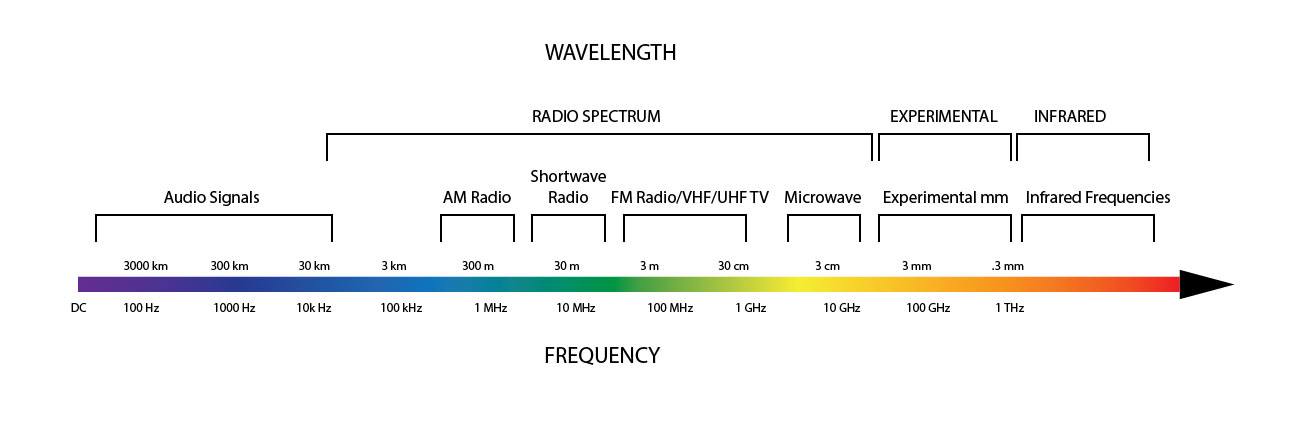

Frequency – the rate, or number of times per second, at which a signal or event happens. Frequencies are bounded at DC or direct current on the low end and extend up through the audible spectrum into infinity…and beyond! The unit for the number of oscillations or cycles per second (cps), the hertz (Hz), is named after the German physicist Heinrich Hertz (1857–1894), who made important contributions to the study of electromagnetism in the 19th century. If the frequency of a signal is in the range of 20-20 kilohertz (kHz), humans hear this as sound. As the frequency increases beyond our hearing range it manifests itself in other ways…including light and radio signals (see illustration below):

Figure 1 – A graphic showing the frequency ranges from DC to infrared.

The speed of sound at sea level and 70 degrees Fahrenheit is about 1125 feet per second.
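To put that speed figure to work, here is a minimal sketch that converts a frequency into the length of one cycle of a sound wave in air, using wavelength = speed / frequency. The function name `wavelength_ft` and the constants are my own labels for illustration, and the 1125 feet per second value is simply the approximate figure quoted above for sea level at 70 degrees Fahrenheit; it changes with temperature.

```python
# Approximate speed of sound quoted in the text (sea level, 70 degrees F).
SPEED_OF_SOUND_FT_PER_S = 1125.0
FEET_TO_METERS = 0.3048  # exact definition of the international foot


def wavelength_ft(frequency_hz, speed_ft_per_s=SPEED_OF_SOUND_FT_PER_S):
    """Return the wavelength in feet of a sound wave in air.

    Hypothetical helper for illustration: wavelength = speed / frequency.
    """
    if frequency_hz <= 0:
        raise ValueError("frequency must be greater than 0 Hz")
    return speed_ft_per_s / frequency_hz


if __name__ == "__main__":
    # The commonly cited edges of human hearing, plus a midrange tone.
    for freq in (20, 1000, 20000):
        feet = wavelength_ft(freq)
        meters = feet * FEET_TO_METERS
        print(f"{freq:>6} Hz -> {feet:.4f} ft ({meters:.4f} m)")
```

The lowest audible tones come out tens of feet long and the highest are well under an inch, which is one reason bass and treble behave so differently in a room.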

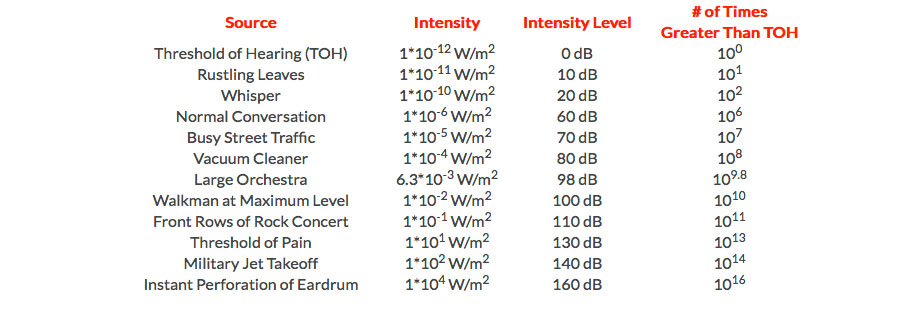

Amplitude – the acoustics term used to describe the volume or loudness of a sound, measured in a relative unit, the decibel (dB). A dB value expresses the relationship between two different amplitudes. When we say one sound is 3 dB louder than another, it means the sound’s intensity (acoustic power) is twice that of the other; doubling the sound pressure itself corresponds to about 6 dB. The decibel scale is not linear. It is logarithmic.

The range of sound amplitudes that can be measured extends from 0 dB of sound pressure (which doesn’t exist except in anechoic chambers) to around 130 dB, which is an intensity ratio of around 10,000,000,000,000 (10 trillion times)! Our ears are amazing instruments.

Figure 2 – The relationship between common levels of sound and their dB equivalent.
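Because the decibel is logarithmic, the arithmetic is easier to see in a few lines of code. This is only a sketch using the standard conventions: 10·log10 for intensity (power) ratios and 20·log10 for sound pressure ratios. The function names are my own and are not from any audio library.

```python
import math


def intensity_ratio_to_db(ratio):
    """Decibels for a ratio of two intensities (acoustic powers)."""
    return 10.0 * math.log10(ratio)


def pressure_ratio_to_db(ratio):
    """Decibels for a ratio of two sound pressures.

    Intensity is proportional to pressure squared, hence the factor of 20.
    """
    return 20.0 * math.log10(ratio)


def db_to_intensity_ratio(db):
    """Inverse of intensity_ratio_to_db: intensity ratio for a dB difference."""
    return 10.0 ** (db / 10.0)


if __name__ == "__main__":
    print(f"Doubling intensity: {intensity_ratio_to_db(2):.2f} dB")
    print(f"Doubling pressure:  {pressure_ratio_to_db(2):.2f} dB")
    print(f"130 dB as an intensity ratio: {db_to_intensity_ratio(130):.3e}")
```

Every additional 10 dB multiplies the intensity by ten, which is how a scale of little more than a hundred units can cover such an enormous range.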

I’ll get to the third item, Harmonic Spectrum, tomorrow…

Very basic physics. Do you think we (your readers) are unaware of this?

Yep, I do think there are a lot of readers at all levels visiting this site.

Light and radio signals are electromagnetic waves, which is a completely different phenomenon from acoustic waves. You do not progress from one to the other by changing frequencies.

The graphic and discussion don’t speak about acoustic waves…they focus on signals and their frequency.

While your intention to focus on signal frequency is clear, the inclusion of wavelength in the graphic is incorrect for audio/sound signals. The wavelengths are for electromagnetic radiation not sound.

Are you claiming that audio signals don’t have any wavelength? Humans can hear…20 Hz to 20 kHz. The speed of sound is around 340 meters per second. So the range of audio wavelengths is 17 millimeters to 17 meters.

The problem with the graphic is that it specifies a wavelength of 30 km to 300 km for the audio signal wavelengths. That’s an error of roughly 6 orders of magnitude, since the speed of light is nearly a million times the speed of sound. 30 km to 300 km is correct for propagation of an electromagnetic wave in a vacuum; it’s not correct for sound wave propagation at standard temperature and pressure.

Thanks for the clarification. I would not have included the wavelengths if they weren’t part of the chart that I based my diagram on.

You wrote: If the frequency of a signal is in the range of 20-20 kilohertz (kHz), humans hear this as sound.

This should have read: If air molecules are vibrating in frequencies from 20 Hz to 20 kHz, humans hear this as sound.

There are radio waves that occupy this same frequency range (designated SLF, ULF, and VLF). We obviously don’t hear them as sound, because they are oscillations in the electromagnetic field, rather than vibrations in the air.

You wrote: As the frequency increases beyond our hearing range it manifests itself in other ways…including light and radio signals (see illustration below):

This should have read: As the frequency of vibration increases, we no longer perceive it as sound. Still, ultrasonic vibrations can exist at least up to a few hundred kilohertz in air.

Defining an absolute limit is difficult, because air is a mixture of different types of molecules. And, anyway, very high frequencies will only propagate over short distances before being absorbed.

Unless writing about electrical signals, it doesn’t make sense to define DC as a lower bound; it should simply be 0 Hz. (Of course, 0 Hz, itself, is not a frequency, as it has no period and infinite wavelength.) Writing that anything extends beyond infinity is clearly nonsensical.

Mixing electromagnetic waves and terminology relating to electrical signals into a paragraph that introduces frequency as one of the properties of acoustic signals will only confuse anyone you are trying to educate.