What the Pros Do?

It’s coming. Yes, the definition and “best practices” documents will be made public sometime around the third week of June…during CE Week in New York. I wish I could tell you that these past 6 months of discussions have produced a clear and concise document, but they haven’t. There has been progress. Consumers will get more information about the provenance of purchased tracks than they’ve ever had before, but it won’t be accurate. And there’s probably no way that it ever will be.

As many of you already know, I’m on several boards that are dealing with the emergence of high-resolution audio. There’s the CEA, the AES and the NARAS Producers and Engineers Wing. They all have a slightly different position on HRA given that their goals and focus are different. The AES deals with the hardware and science of audio and appeals to the gear heads. The P&E Wing of the Grammy organization is in touch with the producers and engineers AND artists that make commercial hit records. Finally, the CEA caters to companies that make hardware for the mass consumer. They don’t really know or care about what happens upstream from them. If it helps their members sell more hardware, they love it.

Today’s conference call was among a small group (maybe 12-15 people) of engineers. It was the P&E Wing’s second call to discuss high-resolution audio. I missed the first call because I was doing demos at the AXPONA show. But I did receive the minutes and read them with interest. Here’s a summary.

It turns out that virtually all artists, engineers, producers and label folks have no clue what high-resolution music is. And they can’t care about something that they aren’t aware of. And even if they do know what the current trends are for better delivery specifications (remember Mastered for iTunes), within their day-to-day engineering challenges they can’t concern themselves with ensuring that everything is done according to “best practices”.

Let me give you an example.

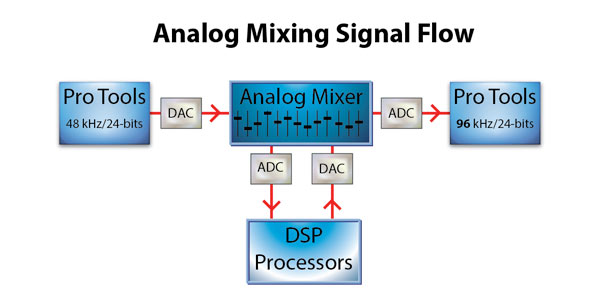

If an engineer is working on a project in Pro Tools, they routinely record at 44.1 or 48 kHz and with 24-bit words. It was mentioned on the phone call that longer words are more important than higher sampling rates (an assertion that I don’t agree with…since the dynamic range of most hit records is far less than even 10 bits). When it comes time to mix down the multitrack to a stereo or surround mix, the same Pro Tools session is used as the mixdown machine. It used to be that a separate mixdown tape machine was used to capture the mixes. Not any more. A full-blown PT rig can cost well over $10,000 and most studios only have one. That means that when mixes are now “bounced” from a set of multitrack parts to a stereo mix, they are placed back on a new stereo pair of tracks within the same session (see diagram below).

Figure 1 – The signal flow for mixing a multitrack record in a single-PT or dual-PT system.

Notice that the sample rate and word length have to be the same in a single PT system. This means that the project will likely be at 44.1 or 48 kHz. And if it is mixed to a second system, what is the provenance of the resulting mixes? Are they 48 kHz or 96 kHz?
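To put rough numbers on the formats discussed above, here is a minimal Python sketch. It assumes the textbook figure of about 6.02 dB per bit plus 1.76 dB for an ideal quantizer driven by a full-scale sine wave, and it takes the usable bandwidth as half the sample rate. The function names are mine, purely for illustration, and real converters fall short of these ideal figures.

```python
def dynamic_range_db(bits: int) -> float:
    """Theoretical dynamic range of an ideal N-bit quantizer (full-scale sine)."""
    return 6.02 * bits + 1.76


def nyquist_khz(sample_rate_khz: float) -> float:
    """Highest frequency a given sample rate can represent (half the rate)."""
    return sample_rate_khz / 2.0


# Word lengths mentioned in the post: the "10 bits" aside, CD-style 16, and 24.
for bits in (10, 16, 24):
    print(f"{bits:2d} bits -> about {dynamic_range_db(bits):6.2f} dB of dynamic range")

# Sample rates mentioned in the post.
for rate in (44.1, 48.0, 96.0):
    print(f"{rate:5.1f} kHz -> content up to {nyquist_khz(rate):5.2f} kHz")
```

By this idealized measure, a mix that uses roughly 10 bits of range occupies about 62 dB, and a 48 kHz session cannot hold anything above 24 kHz no matter what rate the final file is labeled with.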

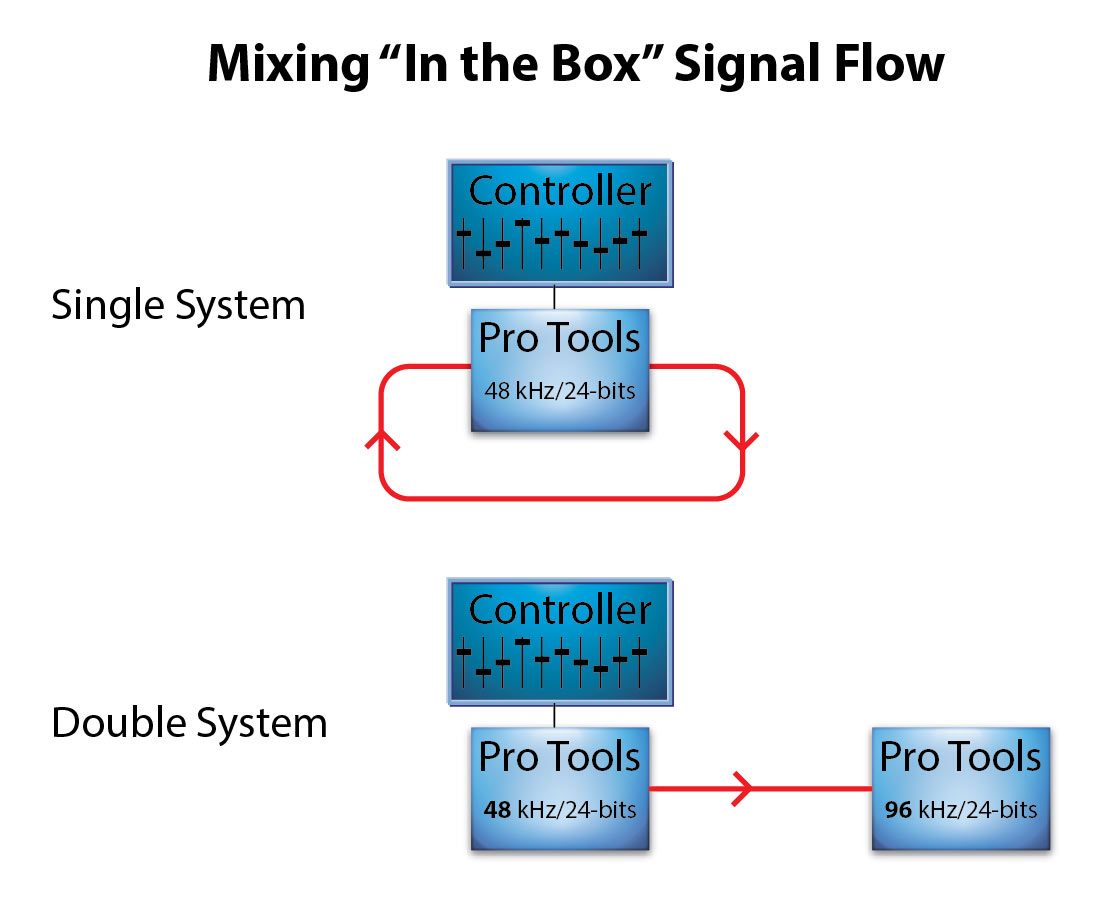

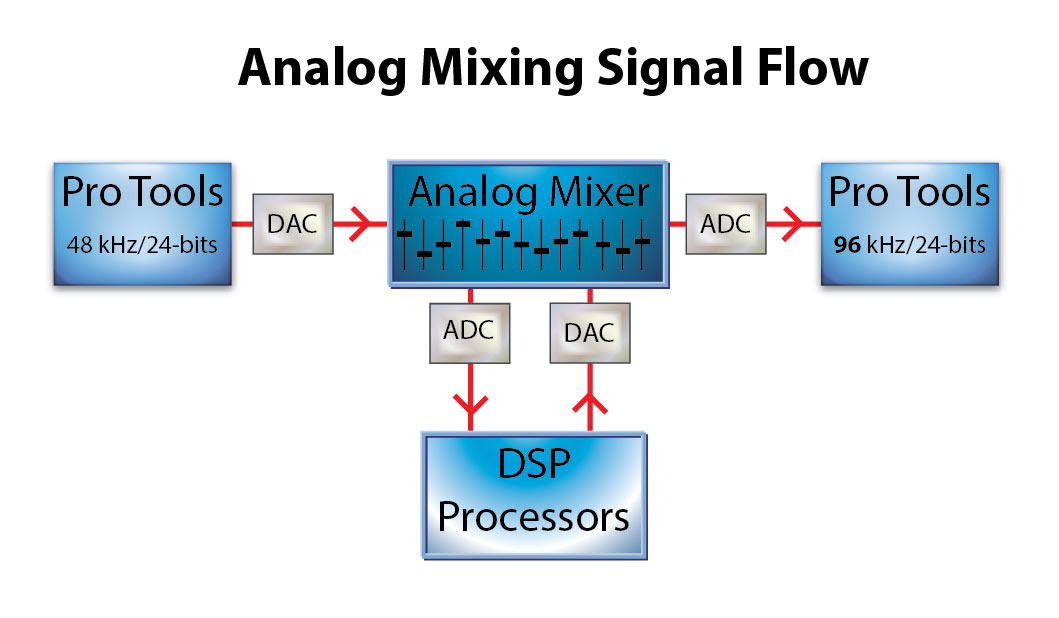

And a third option was talked about on the call. Many engineers prefer to mix through their favorite analog mixing console. That means they go through an extra set of converters. The PT rig is converted back to analog and then converted back to digital at 96 kHz. Some engineers believe that because they’re using analog signal processing and faders, the results are high-resolution. The analog stage “cures” the problems of recording at a lower sample rate or shorter word length during the original recording.

Figure 2 – Using an analog mixing desk and processing to move from one resolution to another.

I’m not even going to talk about the plugins that say they’re running at 96 kHz and are really limited to 48 internally.

We’re in trouble.

“We’re in trouble.”

No kidding. How is this level of ignorance, willful or otherwise, even possible, Mark? Have these people never sat in front of two decent speakers and even attempted an A, B, C or D source comparison?

A corollary question comes to mind. How many millions, nay billions, of dollars in equipment out there goes half or less used – given the poor record/playback mix/source material being used?

In reality, these folks are just meeting the challenges that confront them every day…they get files from different producers at different sample rates and word lengths. They’re just trying to keep up. I don’t blame them…the labels and artists are unaware that people care.

Okay, I get the pragmatic dilemma.

What I don’t understand, however, is why the same problem doesn’t exist in the video world, or at least isn’t as technically egregious there. There’s a 24fps 1080p or 4k standard. Artists, producers, and technicians can see the differences in recording quality.

I, for one, certainly do applaud you carrying the aural flag, Mark. I just wish it was as easy as the visual one.

I can understand the exigencies of professional recording work, but it appears that re-masterings of old analog releases [both on vinyl and digital downloads] with this industry mind-set are really sort of an ‘audio haggis’ since no one seems to give a ‘s–t’ about the ingredients in the stew!

Now, I feel a lot better about carefully recorded ‘needle drops’ – at least I know what I’m hearing!!!

With some of the stuff I’ve heard, you’re giving haggis a bad name….!

You know I recorded a Celtic/Latin Fusion band called “Bad Haggis”, right?

No, do tell. Is it available at AIX?

Yes, Bad Haggis is one of the more esoteric projects that I recorded. It’s an amazing record with amplified pipes, fiddle, Latin percussion and vocals by Ruben Blades.

Mid-fi

So, without getting into all the other issues that could be raised, let’s talk about bit depth.

It’s true, for a compressed pop mix you don’t need a lot of dynamic range IN THE RELEASE FORMAT. The release format only has to capture the mix, and it is usually normalized so that whatever the bit depth is, it is fully utilized. As a consumer, I find that a 16-bit release format is usually fine.

But when you are recording, you must leave 10 dB or more of headroom on each track so that an unexpected peak doesn’t splatter and ruin the take. Then as you mix, you may find that a quiet passage needs quite a boost, or some high end added, or both. If you are working with 16-bit tracks, you are often screwed at this point, pulling the track’s dither up into audibility. Lastly, if you have 40 or 80 tracks active in a mix, all their noise is summed, adding to the mix’s noise floor. So, as a pro, I would never work with less than 24 bits.
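The argument above is really a noise budget, and it can be sketched in a few lines of Python. This is a back-of-the-envelope model, not a measurement: it assumes ideal quantization noise (ignoring dither, which raises the floor a few dB further), equal and uncorrelated noise on every track so that noise adds in power, and a hypothetical 12 dB boost applied during the mix. The function names are made up for illustration.

```python
import math


def ideal_range_db(bits: int) -> float:
    """Theoretical dynamic range of an ideal N-bit quantizer (full-scale sine)."""
    return 6.02 * bits + 1.76


def summed_noise_rise_db(track_count: int) -> float:
    """Rise in noise floor when equal, uncorrelated noise sources are summed."""
    return 10.0 * math.log10(track_count)


def remaining_range_db(bits: int, headroom_db: float,
                       boost_db: float, track_count: int) -> float:
    """Worst-case range left after headroom, a mix boost, and track summing."""
    return (ideal_range_db(bits) - headroom_db - boost_db
            - summed_noise_rise_db(track_count))


# 10 dB of tracking headroom, an assumed 12 dB boost, 40 or 80 active tracks.
for bits in (16, 24):
    for tracks in (40, 80):
        left = remaining_range_db(bits, headroom_db=10.0, boost_db=12.0,
                                  track_count=tracks)
        print(f"{bits}-bit, {tracks} tracks: about {left:.1f} dB left")
```

Under these assumptions, 16-bit tracks end up with roughly 60 dB between peaks and the summed noise floor, while 24-bit tracks keep well over 100 dB, which is the practical reason for tracking at 24 bits even when the release format is 16.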

Completely agree…I was referring to the release formats.

The diagram you’ve presented shows DSP being used in the analogue mixing stage. Surely once you’re in the analogue domain you’d use analogue effects. Otherwise what’s the point? You may as well stay in the digital domain.

Still, thanks for sharing, this is interesting.

There are lots of analog effects…EQ, limiters etc. But when it comes to reverb, delay, harmonizing etc…they are all DSP enabled.

Dave, it’s like Mark said: they are using the analog desk for familiarity; it has nothing to do with quality.

That and there’s no doubt that analog consoles have a distinct sound. Cookie at Blue Coast mixes her DSD recording in the analog domain and then recaptures the stereo output at 5.6 DSD.

The responses in these reader comments seem to indicate to me that “best practices” only happen when it is convenient or not too difficult.

The more disturbing thing (to me) that Mark said was: “It turns out that virtually all artists, engineers, producers and label folks have no clue what high-resolution music is. And they can’t care about something that they aren’t aware of.”

Yes, sí, oui… everything will trickle down to the money anyway.

There’s one point you make here on which I beg to differ. Maybe in the States studios work in the box at 48/24, but that’s not the case around here. We’ve been recording 96/24 since, at least, 2007. And I don’t mean my studio. I mean all the studios around here that use PT.

Maybe it’s been so many years that our sessions automatically open in 96/24 that just now it dawns on me that we thought the rest of the world was doing it that way.

All of our mixes are 96/24 and our finished stereo mix goes the same way. The mastering facilities around here, including the really big ones, start with that mix and send us back the mastered final in 96/24 AND 48/24… then we do whatever we please.

I don’t know if most studios nowadays rely only on digital processors, but we don’t. We use lots of analogue equipment, vintage things that alter the signal on the input path as inserts, and the signal goes directly to PT-HD. So, yes, there’s a lot of analogue work done in the process (EQs, compressors, opto-gates, all mic preamps… you name it), and all of these things, in the end, give each track its own signature that, when mixed, has the character you “painted” in your mind while recording.

I know, for sure, that many studios in LA, San Francisco, Nashville, NY and Mexico, by default, record everything in 96/24. Even though we have the capability to record in 192/24, we decided a long time ago not to do it because we were stressing our systems to their max and sometimes they crashed. Not to mention we needed a closet full of high-speed RAIDs.

Carlos…the gist of the conversation is that the systems clog up because there are so many tracks. Yes, there are a large number of studios running at 24-bits but fewer that have adopted 96 kHz as the standard. There are many…but I don’t think it’s the majority yet.

“Some engineers believe that because they’re using analog signal processing and faders, the results are high-resolution.” Get them out of the gene pool!

Obviously, you can’t increase bandwidth by utilizing the setup in Figure 2 unless you like the potential harmonic distortion of the analog stage. I can forgive artists, producers and label folks for not grasping real HiRes audio. Heck, we still don’t know what to call it or what it really means. However, an engineer should know better and educate the artist and producer. I’m not sure what you can do with the label folks…

If it goes through an analog stage then all of the bad “digital voodoo” has been removed….don’t ya know?

Of course. It just smooths all of the jaggies back to their previous analog incarnation.

Blaine,

You would be surprised at the quality, dynamic range, and noise floor that today’s finest equipment, as well as yesterday’s best (with a bit of modification), can produce.

When using ultra high end converters and staying at 96/24 the entire time, going back into the analog realm with some of the tracks, or to bring in the attributes of something like real reverb, i.e. acoustic chambers, can sound quite beautiful.

We have a lot of incredibly talented engineers who utilize hybrid mixing techniques, and it might be possible you have heard it and not known it.

Unfortunately, with all the technology and higher track counts available today comes a much greater degree of poor quality mixes.

A good part of this may be attributable to a much lower cost of entry into the “Recording Studio”, and the resultant position of recording engineer.

The beauty of it however, is we have a chance to hear a much broader selection of musical talent than ever, and the possibility of finding those rare diamonds has never been better.

While I am deeply in love with incredibly well crafted recordings played back on superb equipment, without great musicians and the performance that stirs emotion from our very being, what would we have with HRA?

A brilliant and enlightening article, thank you Mark. Not to mention shocking and disillusioning.

Do you think your article pertains right across the music spectrum? Or, is it mainly focused on the pop-rock area, i.e. “The Charts”?

I am thinking about the classical and jazz and acoustical/folk sectors. Do you think they are any different?

A very interesting point. This applies mostly to the commercial record business. There were a number of well-known engineers in the audiophile space on the call today…in fact, more than pop types.

Here’s an interesting data point that I recalled reading some time back – Anderson records Patricia Barber’s excellent sounding ‘albums’ using PT at 96/24 and then mixes externally on a Neve console. His work sounds great to my ears.

His recordings are wonderful…but by mixing in the analog domain, he does pass through additional converters.

In an interview with Barry Holden, VP of Classical Catalogue at Universal Music Group, posted by Jason Victor Serinus (Stereophile) on March 22, 2014, Barry Holden stated that “Two years ago, we told our labels not to record in anything less than 24/96.”

http://www.stereophile.com/content/universal-music-groups-blu-rayhi-res-initiative

That’s exactly the point of my post. What gets delivered to UMG or any other label may say it’s 96 kHz but the production may have started as something less.

Mark:

My old copy of Adobe Audition [ver 1.5 -> AKA Cool Edit Pro] provides a ‘spectrogram’ of a digital audio recording and it appears to show the true frequency content of a recording graphically, similar to the ones you show with the latest version on your website.

Q: Is this a ‘necessary and sufficient’ condition for determining the ‘provenance’ of a recording? Can I rely on this tool to tell me if a recording has been ‘up-sampled’ from a lower resolution by the absence of any frequency content above the Nyquist frequency of the lower rate [1/2 the sampling freq] OR are there ways to artificially add higher frequency content?

It is a very powerful tool and can assist in the determination of a file’s provenance. But there are ways to change the spectra of a given file. I’ll be writing about Clari-Fi from Harman, and I previously talked about Sony’s DSEE process back in early January.
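For readers who want to try the spectral check themselves, here is a rough Python sketch of the idea behind reading a spectrogram for provenance. It uses two synthetic signals at 96 kHz rather than a real file: one with a small 30 kHz component (standing in for a native 96 kHz capture) and one with nothing above 24 kHz (standing in for a 48 kHz source that was up-sampled). The function names, test tones, and amplitudes are all assumptions for illustration, and the naive DFT is only practical for short snippets.

```python
import cmath
import math


def band_energy_fraction(samples, sample_rate_hz, cutoff_hz):
    """Fraction of spectral energy at or above cutoff_hz, using a naive DFT."""
    n = len(samples)
    total = 0.0
    above = 0.0
    for k in range(1, n // 2):  # skip DC, stay below Nyquist
        coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(samples))
        energy = abs(coeff) ** 2
        total += energy
        if k * sample_rate_hz / n >= cutoff_hz:
            above += energy
    return above / total if total else 0.0


def tone(freq_hz, amplitude, sample_rate_hz, length):
    """A sine wave sampled at sample_rate_hz."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate_hz)
            for i in range(length)]


RATE = 96_000
LENGTH = 480  # 200 Hz bin spacing, so both test tones land exactly on a bin

audible = tone(10_000, 1.0, RATE, LENGTH)
ultrasonic = tone(30_000, 0.1, RATE, LENGTH)

native_like = [a + u for a, u in zip(audible, ultrasonic)]
upsampled_like = audible  # nothing above the 24 kHz Nyquist of a 48 kHz source

print(band_energy_fraction(native_like, RATE, 24_000))
print(band_energy_fraction(upsampled_like, RATE, 24_000))
```

An empty band above 24 kHz in a 96 kHz file is a strong hint that the source was 48 kHz or lower, but the reverse does not hold: as noted above, processing can add content to that region, so energy up there does not by itself prove a native high-resolution recording.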

What will we do without your practical knowledge? Yes, we are in trouble getting true 96 kHz. Alphonso Soosay

Mark,

I meant to ask you…

Since everything seems to be working towards the 96/24 res. as a (hopeful here) standard, is there a particular reason RedNet systems all run at 48/24?

Thanks,

John

The reason is the same one that limits most of the plug-ins from Universal to 48 kHz…the processing power to do all of the things we would want isn’t there. The SSL system is simply working within the domain of most studios’ current infrastructure.