Why I think HD audio is irrelevant

The title of today’s article doesn’t reflect my own experience or position, but the author of a blog post with this title alerted me to it. I was intrigued and made my way over to his new blog site to check it out. I was perfectly willing to take his test and see if I could tell the difference between HD-Audio and AAC 256k VBR…one of his tests. I’ll provide a link to his page at the end of the article so you can check it out yourself. But read the entire post before you do…the test is another example of a fatally flawed test (just like the Boston Audio Society research project). I’ve been in touch with the author and we’re going to sort out how to improve his approach.

According to his website, he’s a science teacher in Barcelona, Spain and is very passionate about music. However, I’m not sure how much of an audio engineer he is: he displays spectrograms on the site and talks about polarity etc., but he didn’t recognize his selected source file as an original DSD recording that had been converted to a 192 kHz/24-bit PCM file…along with all of the ultrasonic noise! He believes, “The signal we start with is an HD audio file of the highest possible quality, sampled at 192 kHz and 24 bit.” I was immediately suspicious about this.

So what’s the problem? I clicked to the test page and chose to download the files rather than stream them through my computer so that I could confirm the provenance of the samples. Gabriel, the author, states, “In the following section you will be asked to compare Master quality audio to lossy iTunes Plus. For the test, I have used master audio quality tracks you can legally download for free from the Internet. Then, I have compressed sections of the originals to AAC 256 kbps using iTunes encoder (iTunes Plus option). Finally, I have edited sample tracks that consist of two sets of about ten short sections of the original and of the compressed tracks, placed side by side so that they can be easily compared.”

Once again, the provenance of the original recordings is suspect. We don’t actually know if the source files are, in fact, high-resolution audio. Just because someone says that they are, and the sample rate is 192 or 96 kHz, doesn’t mean that they are high resolution. This is the same trap that the BAS researchers fell into.

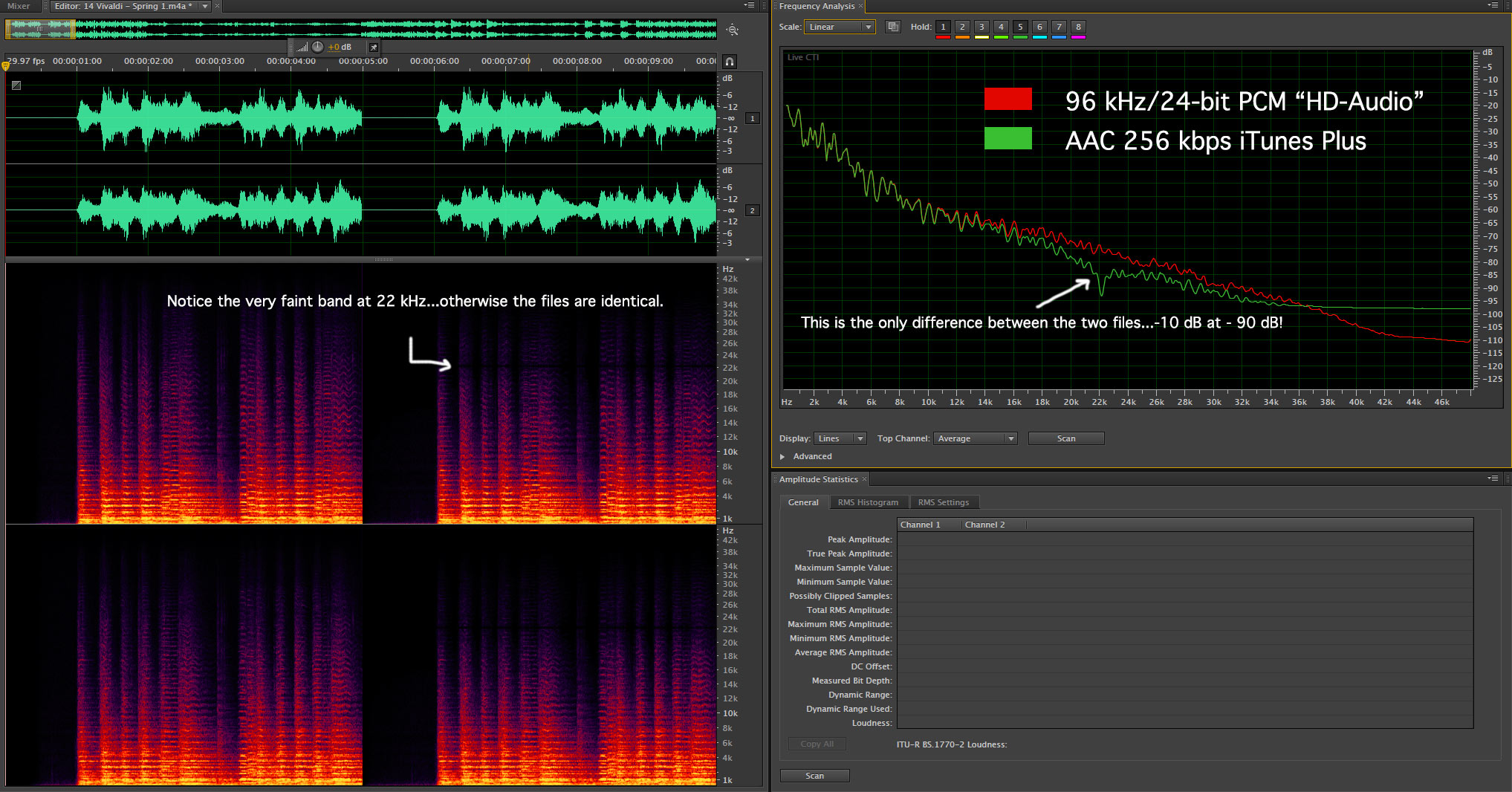

I played all of the files and I sure couldn’t tell the difference between the A and B sections (which were painfully short…the best way to do this comparison is to be able to switch instantly between the A and B versions). As I looked at the spectra of a few of the files, it became painfully clear why no one would be able to detect a difference…they were virtually identical! Here’s a plot from one of the classical selections:

Figure 1 – The spectra of the Vivaldi “Spring” segments A and B. Notice they are virtually identical.

This is a prime example of why it is so important to get meaningful information about the perceptibility of high-resolution audio. This type of casual experiment causes more confusion than it provides information. The people who took the test and couldn’t tell the difference don’t know that the stuff they heard wasn’t accurately testing their hearing…or the perceptibility of HD audio over compressed audio.

That means that there are new audio enthusiasts that will go around claiming that high-resolution audio is “irrelevant”, when we really haven’t put it to a rigorous test. The jury is still very much out.

Here’s the link if you want to visit the site for yourself.

Have you seen this test? Love to hear your comments.

http://archimago.blogspot.com/2014/04/internet-test-24-bit-vs-16-bit-audio.html

More of the same. If the samples that are played don’t exhibit any differences in the quality (in this case dynamic range) that you’re trying to test for, then how can you claim it’s a valid test? In this case, I downloaded the files and the largest dynamic range present was about 60 dB…just 10 to 12 bits of PCM would more than cover that.
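For anyone who wants to check that arithmetic, here is a rough sketch using the standard rule of thumb for ideal PCM quantization: about 6.02 dB per bit, plus 1.76 dB for a full-scale sine wave. The function names are mine and purely illustrative, and real converters and dither will shift the numbers a little.

```python
import math

def dynamic_range_db(bits):
    """Theoretical dynamic range of ideal N-bit PCM for a full-scale sine."""
    return 6.02 * bits + 1.76

def bits_needed(range_db):
    """Smallest whole number of bits whose ideal range covers range_db."""
    return max(1, math.ceil((range_db - 1.76) / 6.02))

# Compare a few word lengths against the ~60 dB measured in the test files.
for bits in (10, 12, 16, 24):
    print(f"{bits}-bit PCM: about {dynamic_range_db(bits):.1f} dB")

print("Bits needed for 60 dB:", bits_needed(60.0))
```

By this estimate 10 bits covers roughly 62 dB and 12 bits roughly 74 dB, so material with only 60 dB of dynamic range never exercises the extra bits of a 16-bit or 24-bit file.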

Hi, I have a question.

I’ve been following rather loosely, but I wanted to know what you thought about traditionally overdubbed, tracked music techniques. It seems that if I did a direct-to-digital stereo recording at 24 bits and 88.2 kHz or more, no compression, that qualifies, right? But what I usually do is a 24-bit, 88.2 kHz recording, and then overdub, equalize, compress, etc., all in the digital domain, all with 64-bit processing, all at the original sample rate. The output mixes are 24-bit, 88.2 kHz. And I do the compression because I like the effect, as almost all pop/country/rock/jazz producers/engineers do. Is that still hi res at that point, before it goes to release?

Commercial recordings subscribe to a completely different set of aesthetics, expectations and market realities. By all means create what you and the artist want with regards to sound. When you’re done with the mixes…and you love the sound…don’t let the mastering engineer smash the life out of the tracks. Maybe you create two masters…one with whatever dynamics are in the mixes and another that is the radio friendly (and listener unfriendly) version.

Hey Mark – This is EXACTLY why HD-Audio, by any name, is irrelevant. There are too many people who are dishonest and are selling the same files in bigger buckets (your analogy). I am sure that many audiophiles have done like I have done and purchased ‘digitally remastered’ recordings only to find that they sound the same. Why should we believe any of these folks again? Burn me once, shame on you; Burn me twice, shame on me. I do NOT intend to get fooled again. If it ain’t multichannel, I just stick with standard CD quality, thank you very much. Sorry. – – I do not mean this personally, I know your stuff is on the up and up and perhaps new stuff from major labels will be done in hi rez, too. But, another stereo copy of [fill in the blank], I’ll pass.

I get it and generally agree. I wouldn’t call high-resolution audio irrelevant though. It’s going to play a role for those who seek a different experience.

Why not do a new variation of the study carried out by Meyer & Moran?

The question of whether we are actually capable of telling CD audio from HRA is certainly a valid one, and perhaps an even more pertinent one now that, thanks to Benchmark’s new AHB2 Power Amp and Mola-Mola’s Kaluga monoblocks and Makua PreAmp, there actually are amps that can deliver HRA to a pair of ideal speakers.

With true HRA recordings made and specially scrutinized for the test, as well as DSD and PCM recordings from prominent labels that claim their recordings to be HRA, and with an ideal playback system and conditions. And why not include 5.1 playback as well, with DVD-A and BluRay audio?

This would be an interesting and relevant test now that we have the conditions to actually carry it out. Come on, Mark, why not? You have exactly what it takes to carry this test out (except perhaps time, lol.)

Cheers

Not clear why ultrasonic noise in DSD is such a big deal? It should be trivial to filter it out with a low pass filter…either in the player or in the encoder. Isn’t it?

Because it’s not there in acoustic space; because most of the time it isn’t filtered off; because it requires a lot of fancy processing to make the audio band clean; and because the ultrasonic partials MIGHT affect our hearing.

You mean…if all the ultrasonics were filtered out, it would also remove all the good/useful ultrasonics along with the ultrasonic noise?

Yep, the spectra above our traditional range of human hearing should continue to diminish in amplitude as they do in nature. By shifting the in-band noise up into the ultrasonic range we pollute an area that “might” be important.

Mark

I followed your link to this guy’s site and found your comment and his response, asking how he could get the free files – pity you didn’t explicitly include your website’s name or url in your post. (He – and his readers – might not think to click on your name and be brought here.)

In any case, I’ll be interested in his reaction after you get back to him and tell him how to get your free samples!

Phil

I’m now convinced that DSD is a “stalking-horse” for analog holdovers who love “warm” sounding recordings – in other words, recordings that don’t have a lot of high frequencies.

So long as they can convince testers to use DSD recordings as their “ultimate-quality” source for comparison testing, PCM won’t be able to prove its worth.

The argument you’ve got to make, over and over, is that since DSD requires shaving off pretty close to the same high frequencies as CD audio, any comparison against CD that includes a DSD step makes the test meaningless.

Once DSD has shaved off the high frequencies, a later PCM step can’t put them back in.

The only way to do a valid test of the worth of high sample rate PCM is to have that branch of the test stay in high sample rate PCM throughout.

He and I have been corresponding. He sent me a bunch of spectrograms of so-called HD-Audio tracks…none of them were. These were from free samplers etc.

It is something like multi-amping: is it better than single amping? Yes, even if “YOU” do not hear any difference.

The fact is that what is called “noise” is not only “noise” but also “harmonics” that most people cannot hear directly, yet they create ambience and soundstage as they beat and interfere with the other sounds in your room.

The response range of many audiophile systems extends well beyond 20 kHz, up to 250 kHz in some cases; loudspeaker systems can reach around 40 kHz or can be fitted with a “supertweeter” to extend the basic range.

All this is not to perceive sound above 20 kHz directly (16 kHz or less for most of us as we age) but to preserve its interactions with the audible range, which in the 20 to 40 kHz range still have some effect.

Therefore the instrumental analysis of the last track reveals, and makes audible, those harmonics that have been called noise but are only partially noise.

Finally, is it better to cut all recorded frequencies above 20 kHz or not? If you do not cut them, is the sound the same or better? The answer is: it is surely better, even if you cannot hear any audible difference.

Mark,

He should start with one of your wonderful 24 bit 96 kHz recordings. Of course there is no audible difference in what he has done!

Also, people will not hear a difference even with well done comparison files if the playback equipment is not up to the task. Weakest link limits!

Roger

Tomorrow I’ll be posting about a project that AVS Forum and I are doing together…stay tuned.

I am the guy from Barcelona who motivated this article. I am not a sound engineer, but as a physicist, I possess sufficient background knowledge to make meaningful observations on the subject discussed here (so I hope you will excuse my ignorance about DSD vs PCM).

Let me start by clearly stating what I consider the biggest strength in my article: there is a simple and powerful method (null testing) to test our ‘beliefs’ in the audio world. If you are familiar with audio editing software (like Adobe Audition, for example), you don’t have to take my word for what I say, just do as I tell you to find out for yourself. Also, there are plenty of tutorials on the internet on how to perform a null test.

Based on the results of null testing, I can confidently reply to Mr. Waldrep’s criticism of both my article and my (discontinued) HD vs AAC 256k VBR test.

He cleverly underlines a mistake I made when I wrote “an HD track of the highest possible quality, sampled at 192 kHz and 24 bit.” The spectrogram in my article clearly shows that it is not of the highest quality (there is noise in the upper band), but it leaves no doubt that it is a perfectly valid choice to prove my point, because it contains frequencies well above the 22 kHz limit that are part of the recorded signal, which proves that the track is indeed sampled at a high rate, no doubt higher than 44.1k.

Mr. Waldrep provided me with an HD track of his own. I performed a null test with his and got exactly the same result: no audible content in the nulled track. So 192k or 96k, DSD or PCM, is not the crucial point here. But, again, if you have your doubts, I encourage you to do the testing yourself.

For me, recorded music (as opposed to live music) is about what we HEAR, and that is why I still think HD audio is irrelevant in this sense. If you tell me that you can ‘hear’ the difference between HD and CD, I will null test whatever you listened to and consistently show you that there is no audible difference there, it’s that simple. If you tell me instead that you can ‘perceive’ the difference in some other way, I will ask you to prove it through blind testing. (So far, nobody has been able to do so in very well executed studies). If we humans can perceive ultrasounds, say, through our eyes, that’s fine with me, but I am not interested, and I think it is not the point for HD enthusiasts either. If you tell me that HD audio makes you ‘feel good’, because your HD track holds ‘all the data’ the microphones picked up at the time of the recording, that is fine with me too (but then, I would ask you, why stop at 192 kHz? Follow this path and wait long enough, and you will have a horde of outraged 384k or 768k followers who will angrily criticize those ‘poor quality’ 192k recordings of the time!)

As for the HD vs AAC test I devised, I wanted to show how narrow the difference in audio quality is between these two formats with the HD tracks I had at my disposal (all of them come from the HDtracks 2013 and 2014 samplers, which you can download for free). It was my first test, and I was not totally happy with it. I had to make compromises due to the limitations of the Adobe form tool and the requirement that the test be taken online (for example, the approach with short clips did hurt the ‘musical’ experience, as I soon realized), but there is a perfectly audible difference in every single clip I chose, supposing your ears are good enough to hear it (they should be if you claim you can tell CD and HD apart), and again I can prove this through null testing if anyone is interested. I explain the reasons why I have interrupted the HD vs AAC test in my blog for further reference, but suffice it to say that it is not due to Mr. Waldrep’s criticism.

Finally, I think my ‘casual’ experiment is indeed meaningful, and I want to insist that it depicts a powerful method for proving or disproving claims of the sort we constantly hear in the audio world. I am not very optimistic, though, about the number of HD audio enthusiasts who will embrace it, as it painfully debunks their dearest hopes.

I do endorse the sort of null testing that you advocate and have used the same procedure to show that expensive digital interconnect cables or optical disc treatments do absolutely nothing to modify the sound of a particular track. But your application is somewhat different and stretches the concept beyond its limits…because of the sample rate conversion process that must be applied to the tracks. There are a myriad of details in the SRC algorithms that guarantee that the files will be ever so slightly different. I suspect that’s why your demonstration contained so much in-band audio when, in fact, there should only be high frequencies left behind.
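To make that caveat concrete, here is a toy null test on synthetic tones rather than real music. Everything in it is an assumption for illustration: the 1 kHz and 30 kHz tones, the 96 kHz rate, and especially the 0.1 dB gain mismatch, which merely stands in for whatever small differences a real sample rate converter might introduce. It is not a model of any particular SRC algorithm or of the test discussed here.

```python
import math

def sine(freq_hz, sample_rate_hz, n_samples, amplitude=1.0):
    """Generate n_samples of a sine wave as a list of floats."""
    return [amplitude * math.sin(2.0 * math.pi * freq_hz * n / sample_rate_hz)
            for n in range(n_samples)]

def rms_db(samples):
    """RMS level in dB relative to full scale (1.0); -inf for pure silence."""
    mean_square = sum(s * s for s in samples) / len(samples)
    if mean_square == 0.0:
        return float("-inf")
    return 10.0 * math.log10(mean_square)

def null_test(a, b):
    """Subtract b from a sample by sample and return the residual."""
    if len(a) != len(b):
        raise ValueError("tracks must be the same length and time-aligned")
    return [x - y for x, y in zip(a, b)]

RATE = 96_000   # hypothetical sample rate
N = 9_600       # a tenth of a second, a whole number of cycles of both tones

in_band = sine(1_000, RATE, N, amplitude=0.5)        # audible content
ultrasonic = sine(30_000, RATE, N, amplitude=0.05)   # content above 20 kHz
hi_res = [x + y for x, y in zip(in_band, ultrasonic)]

# Case 1: the comparison copy is a perfectly matched band-limited version,
# so only the ultrasonic tone should survive the subtraction.
residual_ideal = null_test(hi_res, in_band)

# Case 2: the copy picked up a 0.1 dB gain change (an illustrative stand-in
# for small processing differences); part of the 1 kHz tone no longer nulls.
gain = 10.0 ** (0.1 / 20.0)
mismatched = [gain * x for x in in_band]
residual_gain = null_test(hi_res, mismatched)
in_band_leftover = null_test(in_band, mismatched)

print(f"ultrasonic tone alone:      {rms_db(ultrasonic):.1f} dBFS")
print(f"residual, matched copies:   {rms_db(residual_ideal):.1f} dBFS")
print(f"residual, 0.1 dB mismatch:  {rms_db(residual_gain):.1f} dBFS")
print(f"in-band part of that:       {rms_db(in_band_leftover):.1f} dBFS")
```

With perfectly matched copies only the 30 kHz tone survives the subtraction. With the small gain mismatch some of the 1 kHz tone is left in the residual as well, which is the kind of in-band leftover that makes a null test across conversions hard to interpret.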

The key piece of research that needs to be done with great rigor has still not been done. It’s simply not possible to say there’s no “audible content” in the nulled track until we do this level of testing. Your definition of “hearing” may differ from mine. If I can measure changes in the brain between a played track at 96 kHz/24-bits and one at 44.1 kHz/16-bits, then I believe that we should record and produce at the higher sampling rate. If it turns out that there are no changes or no one can reliably identify differences, then I’ll write that up and move on. Your test remains questionable for me. I’ll reserve judgement for at least a little while longer. I appreciate your thoughtfulness and encourage continued polite debate.