High-Resolution: Does it matter? Part I

I am a member of several audiophile FB groups and regularly post comments when I think I have something to offer. Recently, someone asked whether high-resolution was really worth it. Several others chimed in, taking a variety of positions. But there was one comment from a well-known audio expert that contained a link to an article he had written about high-resolution audio. He takes the position that it’s unnecessary and that “repeated studies” have shown that listeners cannot tell standard-res from high-res audio. I clicked on the link and read his brief article. I disagree with his conclusions that ultrasonic frequencies have no effect on “in band” frequencies and that 24-bit audio is virtually identical to 16-bit audio, but that’s not what triggered this post.

To reinforce his position, he prepared a group of four audio files so that readers could compare standard-res vs. high-res for themselves. He got hold of a couple of “HD recordings” (one a pop tune and the other a classical track), processed them to standard-res, and then placed the downconverted file in a 96 kHz/24-bit container. This results in two files that are exactly the same size. Those wishing to participate in his casual survey can listen repeatedly to the files on their own system and then report back which they think are the standard-res versions and which are the high-res versions. So I downloaded the zipped file and set about making my own comparisons.

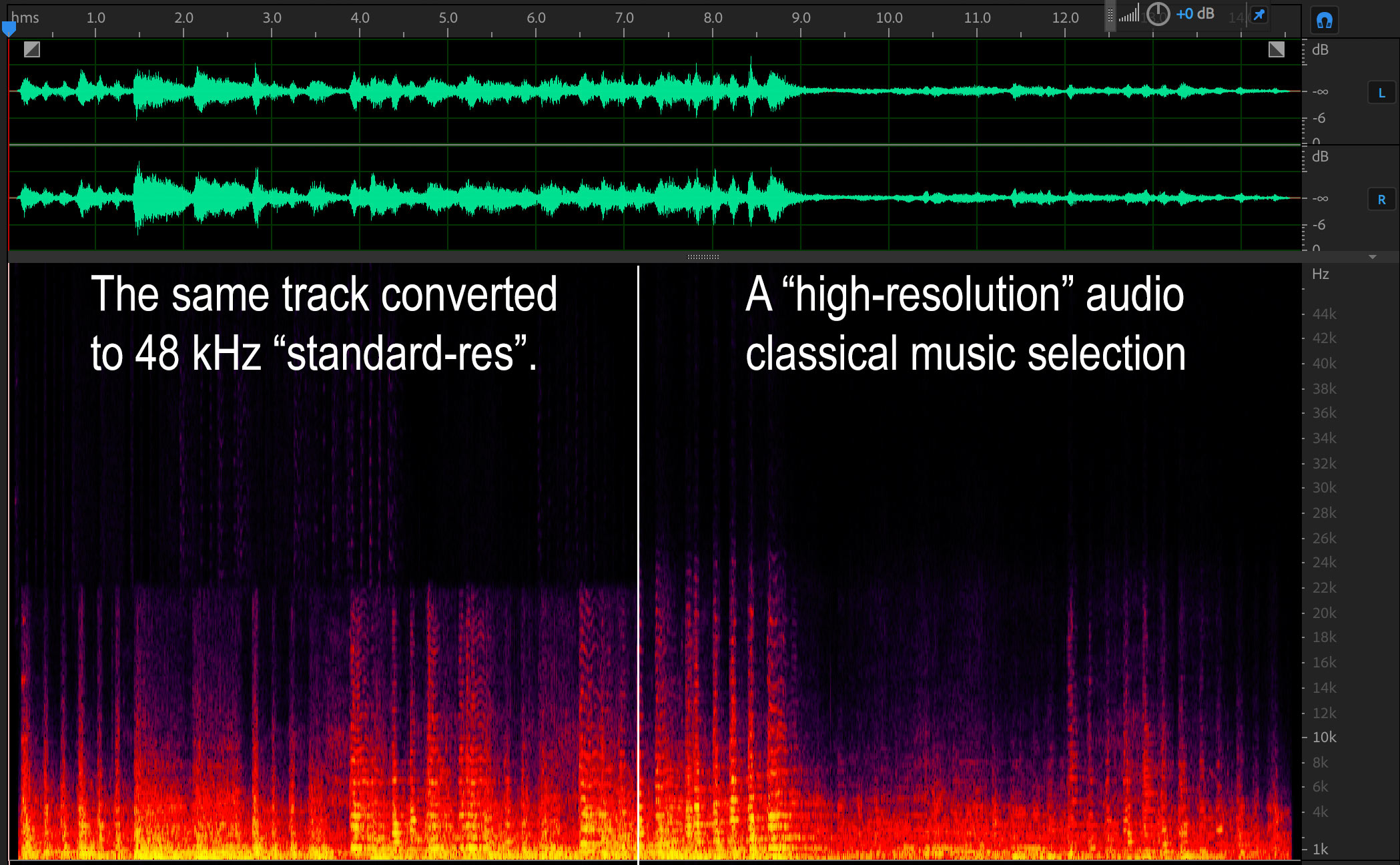

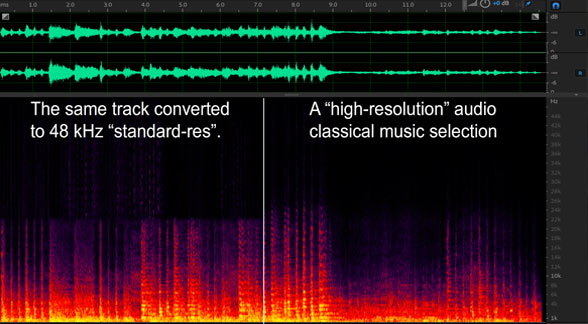

Wouldn’t you think that a test of this sort would require that the source recordings actually be high-resolution? The original 96 kHz/24-bit files have to “have content out to the upper limit of a 96 kHz sample rate”, which means there would be partials up to the Nyquist frequency of 48 kHz. So the first thing that I did was analyze the files using Adobe Audition. I wanted to confirm that the examples were worthy of comparing. Well, I wasn’t surprised to discover that there was virtually no difference between the A and B versions of the files! Take a look at the spectra of the classical example from the download.

The “so-called” high-resolution track is on the right-hand side and the downconverted 44.1 kHz track is on the left. The standard-res version should have a sharp upper limit at around 22 kHz, but it shows some phantom ultrasonic stuff. The right-hand spectrum does extend slightly higher, maybe to 24 kHz, but there’s not a lot of ultrasonic material there.

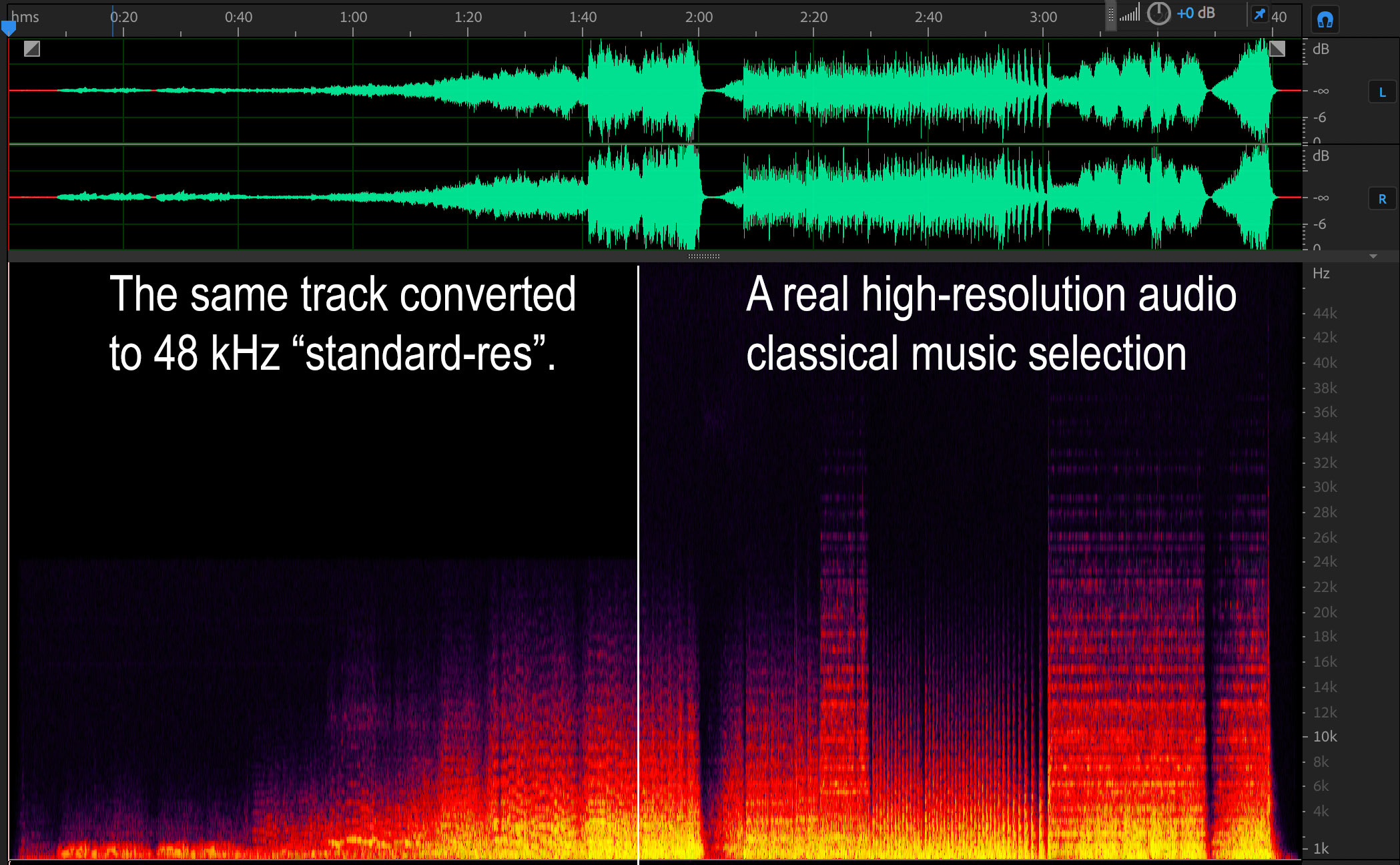

I know what a real high-resolution spectrum looks like. There are lots of them in previous posts and lots of them in the Music and Audio book. I have included the spectrum of one of my high-res classical tracks below for comparison.

The right half of the plot shows a huge amount of ultrasonic audio, well past 35 kHz. This is the finale of Stravinsky’s Firebird Suite and is a particularly loud section. The left half is much quieter and doesn’t actually pass above normal CD frequencies but does have a sharp cutoff at 22 kHz.

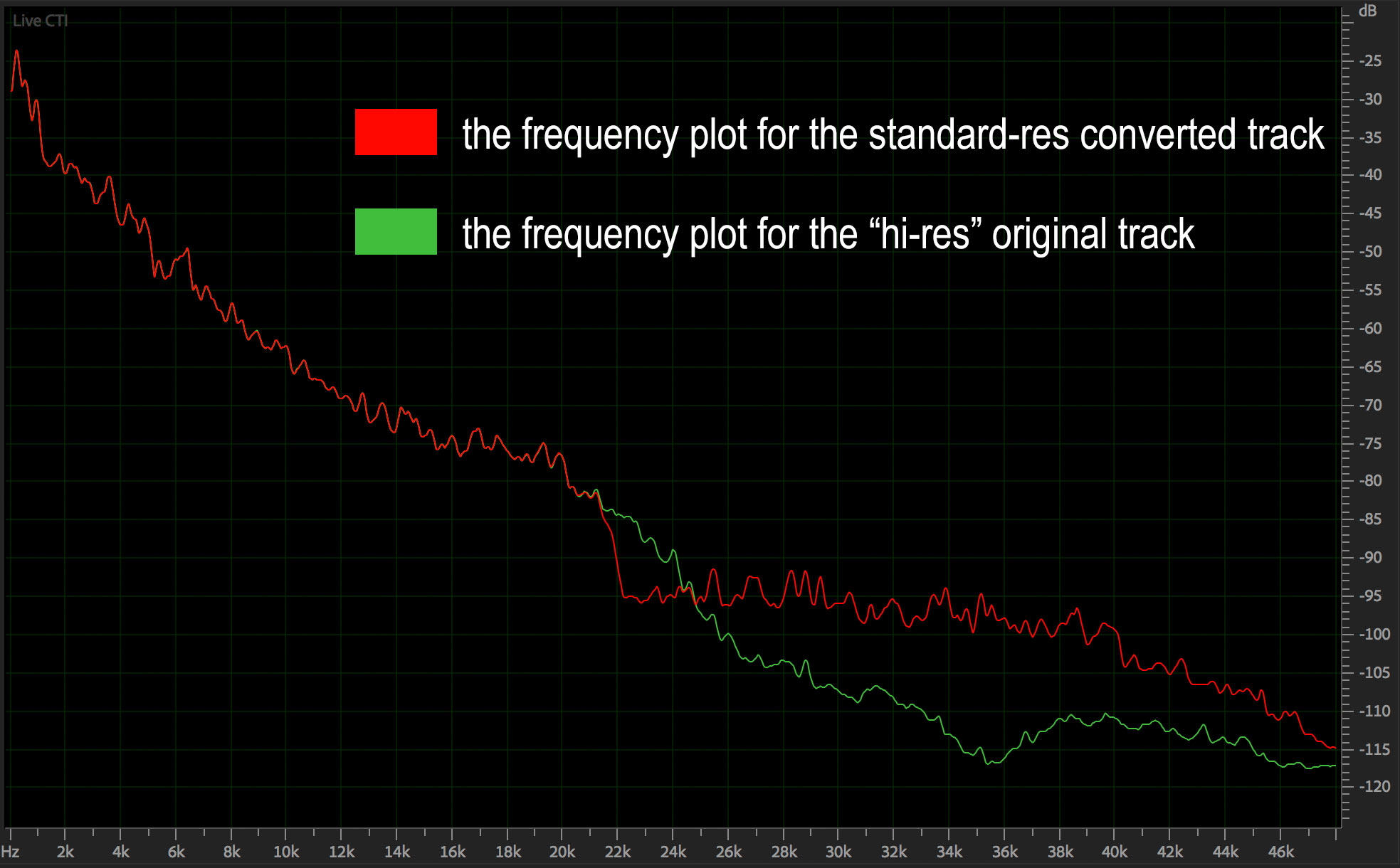

A better way to illustrate the amount of content provided by high-res sample rates is to scan the entire file and plot the amplitude vs. frequency of the entire selection. The plot below is the classical piece I downloaded. Notice that the standard-res converted file actually contains more ultrasonic content than the original! That’s not supposed to happen. Now maybe this is the result of the software that was used to make the conversion, but it definitely shows lots of content above 24 kHz (I converted down to 48 kHz). The red line should drop straight down, and it doesn’t.

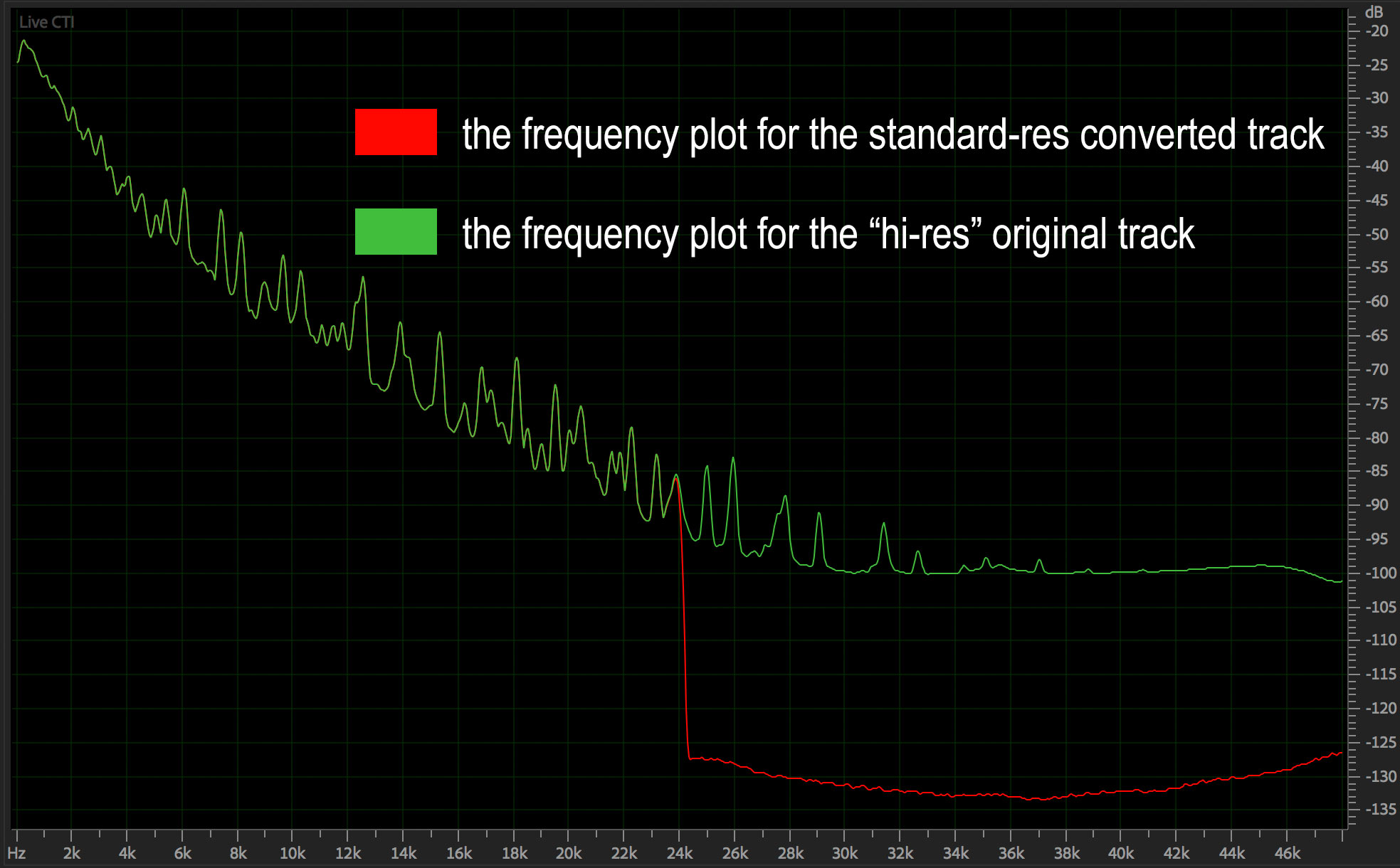

Take a look at the conversion that I did on my file. It shows the proper relationship between a real high-resolution track and a CD version. The red line does drop immediately at 22 kHz.
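For readers who want a number rather than a picture, here is a minimal sketch of the same idea: measure how much of a signal's energy sits above the CD band edge. It is not the Audition analysis used for the plots. The function name `band_energy_fraction`, the 22,050 Hz cutoff, and the synthetic two-tone test signal are all assumptions made for illustration, and a plain DFT is used only to keep the example self-contained (a real file would call for an FFT library and windowed averaging).

```python
import cmath
import math

def band_energy_fraction(samples, sample_rate, cutoff_hz):
    """Fraction of signal energy at or above cutoff_hz, using a plain DFT.

    Hypothetical helper for illustration; DC and the Nyquist bin are skipped.
    """
    n = len(samples)
    total = 0.0
    above = 0.0
    for k in range(1, n // 2):
        coeff = sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        power = abs(coeff) ** 2
        total += power
        if k * sample_rate / n >= cutoff_hz:
            above += power
    return above / total if total else 0.0

# Synthetic stand-in for a track (an assumption, not real music):
# a 1 kHz tone plus a 30 kHz partial that is 20 dB lower, sampled at 96 kHz.
FS = 96_000
N = 480  # 200 Hz per bin, so both tones fall exactly on a bin
signal = [
    math.sin(2 * math.pi * 1_000 * t / FS) + 0.1 * math.sin(2 * math.pi * 30_000 * t / FS)
    for t in range(N)
]

fraction = band_energy_fraction(signal, FS, 22_050)
print(f"energy above 22.05 kHz: {fraction:.4f}")  # roughly 0.01 for this test signal
```

A properly downconverted file should return a value very close to zero for the same cutoff, which is the "red line drops straight down" behavior described above.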

So I wonder what kind of results the author of the comment has received from his survey. It would be absolutely impossible for anyone on the planet to perceive any differences between the two files he provided. I’m not going to claim that listeners would be able to perceive differences between my A and B versions, but at least I know that substantial differences do exist. As I said, I wasn’t surprised that the audio he acquired didn’t measure up. There isn’t a lot of really good high-resolution content available from any label. I’m in a unique position because my entire catalog boasts fidelity greater than vinyl LPs, analog tapes, and CDs. But that’s not the reason I advocate for real high-resolution tracks. There are other “non audible” reasons why the world should adopt 96 kHz/24-bits as the minimum AND the maximum specifications. We’ll talk more about that in Part II.

Hi Mark.

For the last 2 years I have analysed digital music using MusicScope from XiVero.

Oliver Masciarotte reviewed this product in audioxpress, October 2016.

He wrote:

“XiVero bills MusicScope as a ‘…high precision measuring tool that works as an Audio-Microscope to visualize the different quality aspects of a music collection.’ Far from a singular tool, MusicScope is a multi-tool of epic proportions.”

Only a handful of my HD albums have displayed true HD properties. Most stated 24-bit files are far less, usually 16 to 18 bits, and let’s face it, 24 is a practical fantasy for playback.

DSD has heaps of HF hash which carries over into meaningless noise when converted into PCM…just a lot of wasted data (and money for the bigger data download). Some of this HF extension becomes a joke when the recording microphone response is incapable of reaching into ultrasonic frequencies anyway.

And what of older analogue recordings digitised into these “HD” re-releases? Some of them barely meet the Redbook standard.

However some HD recordings are the real deal. AIX of course are and measure accordingly.

On concentrated listening to such HD recordings I can sometimes hear differences compared to a 44/16 down conversion, but these are very subtle and easy to miss. Occasionally I find it difficult to hear a difference between a CD file and a 320 kbps MP3, usually on a piano recording where natural HF is less.

I have also seen sub-harmonic spikes appear in the audible band presumably generated by excessive non-musical content in the 40-80kHz region.

So my opinion on all this relates to the quality of the recording and mixing process in the first place, coupled with the abilities of the listener and playback system to resolve the improvement the HD format provides, albeit subtle and easily underheard.

Regards. Rod.

PS. Love your book Mark.

Thanks Rod. MusicScope is a very nice piece of software and allows users to analyze the files they download and play. And you’re right about the paucity of real high-resolution audio projects. The HD moniker is used to promote sales rather than as a true marker of increased fidelity. That’s why I use the term “potential” so often. The entire production chain has the potential to meet or exceed the limitations of human hearing, but the artists, engineers, producers, and labels choose not to exercise that option. Their metrics are different. And that’s OK. I started my label to show that it’s possible to move beyond vinyl LPs, analog tape, and CDs. I’ve shown that.

Hi Mark,

I am totally flabbergasted that we still have this endless, tedious discussion going on. Will somebody please commission a properly regulated double-blind listening test with a sizeable number of people and a decent cross-section of ages and genders, and get some proper data? Not just on the immediate “can you tell the difference?” question between various sample rates and bit depths but, as importantly I feel, on the effects of prolonged listening to different qualities of digital music. My own experience, which is very limited and not with what could be described as high-end audio gear, is that HD music, whether old analogue recordings transferred or new recordings captured in HD such as your own, is far more pleasant, for want of a better word, and not mentally fatiguing in the way that ‘CD quality’ seems to be to me. I think you made the point in one of your lectures that after mastering CDs you were, in your own words, “toast” at the end of the day, whereas with 96/24 there is no problem.

Yes, it goes on and on without resolution. The evidence and studies that I’ve examined do show that people can’t tell the difference between a high-resolution file and a CD version of the same file, even when you start with a bona fide HD file. But it doesn’t matter. As audiophiles, we have the choice to seek out fidelity or a particular “sound”. There are formats and distribution methods that cater to all tastes. But it’s simply too easy to record in HD and deliver in HD to ignore it. Further studies may show that there is a “new component” in play that warrants moving up to 96/24. And I know that my recordings do not lack for anything in terms of “time smear”, pre-ringing, distortion, “low level details”, “soundstage” or anything else, so why continue to focus on specs and new formats when all we really need is better recordings?

Hi Mark,

You said it: it’s not majorly difficult to do, so do it, and give it the benefit of the doubt. I struggle to hear any real difference between the CD and a 320 AAC rip of the same in terms of basic listening, but after an album’s worth of the AAC my brain says “please turn this off”, and I would be fascinated to hear others’ views on this point….

If his files actually are published and sold as high res audio, then he doesn’t really have to prove that they contain substantial ultrasonic music content to still be right: what gets sold as “HD recordings” is what he is using.

I understand your point, but he is kind of right if real life products typically don’t have ultrasonic musical content. We all know your products are hardly typical.

They’re not his files. I get the sense that he acquired them from a third party, but I’m not sure. He was the one that validated them by saying they have musical content all the way to 48 kHz. My point was that pitching a survey based on files that do not have a difference is a bit sketchy. If you remember, the casual study that Scott Wilkinson and AVS Forum did using my content, limited to people with highly resolving systems, did show that people could do better than random.

Hi Mark,

Like this post, back to the real issue of Hi Res and how it is indeed peddled as a sales tool rather than used to bring greater fidelity to the consumer.

Looking forward to the next part of this piece.

Thanks

Gordon

Hi Mark,

I have followed all of the information that I could find around this topic for a long time. I completely agree with your research. I am certainly not an engineer and do not have any professional background in this field. I am just an audiophile with a big interest in digital music. I have been the victim of many so-called Hi-Rez file purchases that never sounded any better than my CDs. Thank you for providing your research findings and being the consistent voice for us. I now have a very good understanding as to why I have spent money unwisely. I find it ridiculously hard to even find out the original source material of the HD files for sale on the internet. This has paused my purchase habits for new HD files (what a shame). I believe this HD music file business to be very ingenious and somewhat dishonest. I have purchased some of your AIX HD recordings that are fabulous (thank you). I am just trying to collect digital music files that are the closest representation of the best original recordings that I can find. I may not be able to discern between the files; however, I would know that I have collected the best potential I could find!!

Thanks Curtis. We all would like to get the best captures of our favorite music. However, many, if not most, of the new transfers to “high-res” audio files or MQA’d files come from second-rate safety copies. Mastering studios struggle to find the original masters. Many times they don’t exist or have been worn out.

LOL, wow Mark, you really missed the boat on your analysis of my “high-res” file comparisons. First, I would not have minded if you mentioned me by name, but that’s a different issue. Do you plan to open up your web post for comments? I’ll be glad to chime in and have a discussion. Or maybe you can post in one of the Facebook groups we’re both in and discuss it there.

I promise you the source files I started with are absolutely “high res” with ultrasonic content. But you are correct about my adding “phantom” ultrasonic content to the down-res’d version. Otherwise people could easily do what you did and look instead of listen to see which files were stripped of their ultrasonics. I emailed you an FFT screen cap of the classical music HD source file, clearly showing content out to the 48 KHz limit of a 96 KHz sample rate.

Of course, and I’m sure you know this, a proper blind test done live (with people present, not online files) switching a low-pass filter in and out of any source you’d like will settle this in 20 seconds. :->)

Thanks for coming by Ethan. I didn’t want to mention your name with regards to these files because it didn’t seem to matter. The point I was trying to make is more important than the people involved. The comparison of your “high-res” files and those that I have was dramatic — I think you would acknowledge that. I haven’t looked at the FFT you sent…in fact, I haven’t received it (please send to mwaldrep@aixmediagroup.com). But the analysis that I did of the classical file is severely lacking in ultrasonic content. The plots in the article are real — I didn’t alter them except to weight the frequency scale somewhat. Don’t know if your analysis is different.

In the end, I generally agree with you that there is virtually no perceptible difference between a standard-resolution file and a bona fide high-resolution file. But there are aspects to the production process that make using 96 kHz/24-bit PCM a very good thing. So delivering that initial high-resolution quality to end users gives end users a better listening experience. If I were going to do a casual comparison/test, I think using more dramatically different materials would be necessary. I analyzed all four musical examples you provided and found them insufficient to make a good test IMHO.

Let’s formulate a comparison like the one I did with Scott Wilkinson of AVS Forum. For his test, I prepared a few of my tracks exactly as you did but without the additional “phantom” ultrasonic content. People with really good systems did actually beat “random” choice by a substantial amount. And I’ve gotten 5 out of 5 right in my own studio when listening to high-res vs downconverted files — but I know my tracks and have a system capable of delivering high-res music. It’s not an argument that I have any longer. What matters is the quality of the original recording. Is the dynamic range and frequency response equal to (or better) than a live event? We have the potential to make recordings that good.

I think the next step is for me to get my hands on a 20-second excerpt of a file you claim has more ultrasonic content than my files. Then I can apply “my process” to it and see if you (or anyone) can hear the difference. Both of mine came from legitimate HD audio sites, and the FFT I sent (to both of your email addresses) clearly shows content out to 48 KHz. But the real issue is whether anyone can ever hear any difference between any “high-res” audio file and a 44/16 conversion. I think we both know the answer is No, so that begs the question, “Why is this even still being discussed?”

You asked about my survey results. This is what I posted on Facebook on February 16, 2018:

Back on January 2, 2018 I posted this article with files for the “High Definition Audio Comparison” linked below.

http://ethanwiner.com/hd-audio.htm

Following are the results based on 80 people responding. Many people said they couldn’t tell a difference, and so didn’t specify any choices. Even when people did specify a choice, many admitted they were mostly guessing. There are 2 Classical examples and 2 Pop music examples, for a total of 4 possible choices times 80 people = 320 choices.

Classical correct (out of 2×80=160): 47

Pop music correct (out of 2×80=160): 47

Acknowledged they can’t tell: 18

Got all four correct: 4

Got all four wrong: 7

I’m not a statistics guy, but with 80 respondents I think that’s enough to expect random success to be closer to 80 rather than the 47 I counted for both groups. So that implies there may be a perceivable difference between the files, and some people really did hear a difference but thought the processed files sounded “better” for whatever reason. Or it could mean my test is flawed. Then again, only 7 people were wrong on all four files, and only 4 were correct for all four. If there is a difference that only some (younger?) people can hear, I’d expect more to get them all wrong, or all right. I welcome input from anyone more skilled in statistics.
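Since input on the statistics was invited, here is a minimal sketch of an exact binomial check, assuming every choice is an independent coin flip under pure guessing (p = 0.5). The function name `two_sided_binomial_p` is hypothetical. The second calculation rests on a further assumption that is not stated in the survey summary: that the 18 people who said they couldn't tell made no choices at all, leaving 62 respondents and 2 × 62 = 124 choices per genre.

```python
from math import comb

def two_sided_binomial_p(correct, trials):
    """Exact two-sided p-value against pure guessing (p = 0.5).

    Hypothetical helper: doubles the smaller tail, capped at 1.
    """
    k = min(correct, trials - correct)  # the distribution is symmetric at p = 0.5
    tail = sum(comb(trials, i) for i in range(k + 1))
    return min(1.0, 2 * tail / 2 ** trials)

# As reported: 47 correct out of 160 choices per genre.
p_all = two_sided_binomial_p(47, 160)

# Assumption: the 18 "can't tell" respondents contributed no choices,
# so only 62 people x 2 files = 124 choices were actually made per genre.
p_answered = two_sided_binomial_p(47, 124)

print(f"47/160: p = {p_all:.2e}")
print(f"47/124: p = {p_answered:.4f}")
```

Either way, 47 correct is well below what guessing would be expected to produce. But if abstentions were counted as "not correct", the 160 denominator overstates how far below chance the real answers were, so the way non-answers were tallied matters a great deal before reading anything into the result.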

Thanks Ethan…I’d be happy to send you a file with lots of ultrasonic content. I’ll send it via WeSendIt. As for your results, I think they should be discounted because of the marginal “high-res” qualifications and the addition of spurious ultrasonic content that doesn’t belong there. The pop tune is even worse than the classical track.

Mark, why does a recording need a “substantial” amount of ultrasonic content to be considered high resolution? Many musical sources emit frequencies higher than 20 KHz, but the volume of those frequencies falls off steadily for most musical instruments. Since nobody can hear that high anyway, why do you think it even matters? I assume you’re aware of numerous tests where a 16/44 “bottleneck” was inserted into a hi-res playback chain, and nobody was able to tell? So your trying to discount my test because it doesn’t have “enough” energy above 20 KHz seems like grabbing at straws.

My Audio Expert book includes many audio recordings (Wave files) and FFT screen shots (in the print book) to demonstrate stuff like this. For one demo I recorded a tambourine at 96 KHz, and its HF content falls off by 40 dB between 20 KHz and 48 KHz. That’s about the same as my high-res files. The file you sent me seems to have a disproportionate amount of HF energy, and the left and right channels are totally different when viewing the FFT screen in Sound Forge. So I have to think that’s more suspicious than my files!

As to your second comment above, capturing higher frequencies as part of oversampling is not the same as *retaining* those frequencies during the production stage, through to the distribution medium. So no, I don’t think that’s evidence for HD audio being useful either. We can’t hear ultrasonics, and there’s good reason to filter them out – to avoid audible IM distortion farther down the chain or in our ears. Likewise for the noise floor which is perfectly adequate at 16 bits when playing normal program material in any normal room.

Logic and many tests show that 16/44 is all anyone needs for perfect audible transparency, so the burden of proof is on those who claim 24/96 (or higher) is somehow better. I’d love to hear a logical explanation accompanied by actual proof. This would be easy to prove with a comprehensive blind test! But after all these years we’ve never seen such proof, and I’m sure I know why.

You want to stop the capture and reproduction of music at the top of the traditional range of human hearing. You acknowledge that “many musical sources emit frequencies higher than 20 kHz” but then advocate to ignore them. Why? I agree that virtually all commercial recordings do not benefit from increasing the sample rate and adding 8 bits to the word size upon distribution. But a rare few do and my point in making the albums that I produced was to show this. And they do. My position is there are meaningful musical frequencies being produced into the performance space and I want my recordings to capture everything the microphones are capable of capturing. That is the meaning of fidelity. To accept a limit because our current understanding of hearing doesn’t extend beyond 20 kHz seems arbitrary and senseless.

I faulted your examples of “high-resolution” audio as being unsuitable for testing because they fail as high-resolution examples in my estimation. There is very little ultrasonic content, as demonstrated in the spectrograms I created with Adobe Audition. We would want the most dramatic examples of hi-res music to make a fair comparison, don’t you think? The track I sent you and the spectrograph are accurate and real, taken from an unmastered stereo recording at 96 kHz/24-bits. The graph I sent shows perfectly balanced spectra on both the left and right channels. Did you get the attachment? Did you see that they are balanced? Are there differences between the Audition plot and the Sound Forge spectra? I would love to see what you’re referring to that makes my plot suspicious.

Oversampling is not the same thing as recording an original master at higher than 44.1 kHz. When I recorded all of my albums, I chose to run the sample clock at 96 kHz to gain the benefits discussed previously. It’s simply too easy to move a reasonable step up to potentially improve the fidelity of the master. The accurate recording and reproduction of everything that was produced by the band during the session requires more than Redbook specifications. I agree that 16-bits is more than sufficient for virtually all commercial audio productions, but it’s worth striving for the non-normal musical moments played by exceptional systems in amazing rooms. I never claimed that my recordings are normal. They very definitely aren’t.

Many audio professionals and experts, yourself included, are satisfied with the CD specification. I am not. It’s simply too easy to move to “high-resolution” specifications not to do it. There is simply no downside in the current audiophile marketplace. There may come a day when a rigorous double-blind test is done with materials that do qualify as high-resolution. It certainly wasn’t the Meyer and Moran debacle. Other tests have hinted at perceptible differences. But as I said, it doesn’t really matter to me.

The most important thing is to create lush, emotional, sonically wonderful recordings. If given the chance to capture real life dynamics and the full range of musical frequencies, I will do it every time. Thanks for taking the time to share your perspective.

Why do I advocate ignoring ultrasonic content? Because nobody can hear it! You say “a rare few” recordings benefit from HD capture, but you offer no proof. You are not the first person to claim HD sounds better than CD with no proof beyond subjective sighted anecdotal reports. I’m sure you know that only a proper blind test with a sufficient number of trials is acceptable. As you know, I’ve been at this for a very long time, but I’ll be clear: As soon as you or anyone else shows real proof that people can identify CD versus HD, I promise I’ll change my opinion immediately. You can’t just complain that my test or M&M’s were flawed. It turned out later that A FEW SOURCES that M&M played weren’t proper HD. But many were, and nobody could identify those either. So the burden is still on you.

The more interesting question for me is why some people care so much about this. Even if some small group of very young people can just barely tell the difference when ultrasonics are present, why is that so important? Why is so much emphasis put on this teensy aspect of audio, when there are so many more important things to worry about, such as loudspeaker distortion and off-axis response, and room acoustics? All I can suggest, Mark, is that you find a friend to help you do a proper blind test of your own. Or better, why not do a test along the lines of what I did, but using your own sources, and then we’ll have even more data.

I have one more comment I hope you’ll address: In your article above you said, “There are other ‘non audible’ reasons why the world should adopt 96 kHz/24-bits.” There’s only one reason I can think of, and it doesn’t apply to a music distribution media format. Related, I disagree with the claim that ultrasonic frequencies have any effect on in-band frequencies, assuming the audio is clean and not distorted. When I see someone make that claim I always ask for a logical explanation – I have yet to receive one. But I’m glad to hear yours.

So you don’t think that having a higher sample rate provides any benefit during the capture of a new recording? The fact that the corner frequency of the LPF used to minimize aliasing can be moved to a higher frequency and be made with a lower order is a benefit. The destructive and constructive interference patterns of ultrasonic partials can also cause “in-band” distortion.
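The filter-order point can be put in rough numbers. The sketch below assumes a Butterworth low-pass with its corner at 20 kHz that must reach 96 dB of attenuation (about the 16-bit range) by the Nyquist frequency. The response type, the 96 dB target, and the function name `butterworth_order` are illustrative assumptions, not anything specified in this discussion. Real converters oversample and do most of this filtering digitally, so treat it only as an illustration of how much the wider transition band relaxes the filter.

```python
import math

def butterworth_order(corner_hz, stopband_hz, attenuation_db):
    """Smallest Butterworth order giving attenuation_db at stopband_hz.

    Uses |H(f)|^2 = 1 / (1 + (f / corner)^(2n)) and solves for n.
    Hypothetical helper for illustration only.
    """
    ratio = stopband_hz / corner_hz
    n = math.log10(10 ** (attenuation_db / 10) - 1) / (2 * math.log10(ratio))
    return math.ceil(n)

# 44.1 kHz sampling: everything must be gone by 22.05 kHz.
order_44 = butterworth_order(20_000, 22_050, 96)

# 96 kHz sampling: the filter has until 48 kHz to roll off.
order_96 = butterworth_order(20_000, 48_000, 96)

print(order_44, order_96)  # an order above 100 versus an order in the low teens
```

The transition band grows from about a seventh of an octave to well over an octave, which is why a much gentler filter is enough at the higher rate.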

I’ve seen perhaps hundreds of discussions on this subject. Here’s my take:

People on Ethan’s side often rely too much on the Nyquist–Shannon sampling theorem, whereas it has serious limitations:

(A) In its original form it is only valid for a function having a Fourier transform that is zero outside of the region of frequencies being considered for the function representation.

In practical terms, if you ever captured, let’s say, a typical rock band performance, using high-quality microphones and multi-channel 192/24 ADC, you’ll notice that the Fourier transform of some of the captured signals yields far from zero coefficients all the way up to 70KHz. Thus, attempting to capture the performance at 44 KHz makes the Nyquist–Shannon theorem inapplicable.

(B) The constructive proof of Nyquist–Shannon theorem involves Whittaker–Shannon interpolation, which is never precisely implemented in practical DACs, because it involves summation, or convolution in an alternative formula, over infinite series.

Naturally, practical implementations do work close to what Nyquist–Shannon + Whittaker–Shannon would yield even when the sampling frequency is lower than actually required, and the reconstructive interpolation is approximate. But how close?

For some types of signals it is close enough to be indistinguishable to an average ear from the original signal. For other types of signals, and to hearing systems with significant deviations from the average, it is not close enough.
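Point (A) is easier to see with a number. If content above half the sample rate reaches the sampler without being filtered out first, it folds back into the audible band. The sketch below computes where a tone lands; the function name `alias_frequency` and the 30 kHz example tone are assumptions chosen for illustration.

```python
def alias_frequency(tone_hz, sample_rate_hz):
    """Apparent frequency of a pure tone after sampling with no anti-alias filter."""
    folded = tone_hz % sample_rate_hz
    return min(folded, sample_rate_hz - folded)

# A 30 kHz partial sampled at 44.1 kHz shows up as an audible 14.1 kHz tone.
print(alias_frequency(30_000, 44_100))  # 14100

# The same partial sampled at 96 kHz is below Nyquist and stays where it is.
print(alias_frequency(30_000, 96_000))  # 30000
```

This is why any practical 44.1 kHz capture low-passes the signal first, which is also what makes the band-limited assumption of the theorem hold in practice.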

About the notion that 16 bits are good enough:

(1) Let’s separate what the “dynamic range” actually means in different contexts.

In nature, the 120 dB range relates to the difference between the faintest analog sound an average healthy young person can hear, and the threshold of pain caused by another analog sound. When we talk about the perceived 120 dB range of 16-bit digital audio with noise-shaped dither, we describe the ratio of the loudest possible undistorted signal to the noise floor.

Neuro-physiologically and thus musically, there is quite a difference. If the digitally recorded music dynamic range is limited to the typical pop song’s 20 dB, then there is another 100 dB between the least intense sound and the noise floor. In that mode, the tensor tympani, stapedius muscle, and outer hair cells are in steady state, and everything is fine.

When, on the other hand, a person is listening to certain genres of classical music, or an acoustic performance conveying a wide range of emotions, he or she may experience fragments ranging in level from a whisper to barely tolerable: up to a 60 dB difference. In symphonic music especially, the build-ups can be long enough for the aforementioned human hearing compression mechanisms to start adjusting.

At the lowest level, even if the noise floor can’t be distinctly heard yet, the quantization distortions of what is now effectively an Atari-like 8-bit sound, can be heard by some, depriving the music of what is usually referred to as “air”, “authenticity” etc.

24-bit recording guarantees that at the lowest practically encountered signal level, even in the most dynamic music pieces, there is still 16 bits left to reproduce the quiet passages without perceived noise or distortions.

(2) Non-scientific “proof”. In addition to Mark’s, I read accounts by, and spoke personally to many other accomplished sound engineers. Without exception, everyone said that 16 bits don’t provide enough headroom for the music to stay “real”. They consider the 44/16 bits as just one of the legacy output formats they have to deliver their work in.

(3) Market “proof”. Up until around 2007, there was a race going on between pro audio gear vendors to come up with ever higher bit depths and sampling rates. It all effectively stopped at 192/24. That’s the max I’ve ever seen in recording studios, including incredibly lavish ones. The worldwide community of artists, producers, and recording engineers voted with their own money more than a decade ago that this is enough.

The 384/32 pro recording gear is available, albeit very expensive, yet as far as I know, it is mostly used to record very intricate compositions performed by extremely large symphonic orchestras. I didn’t manage to visit one of the studios that have such equipment. In consumer electronics, 384/32 DACs are widely available and inexpensive, yet they appear to only serve marketing purposes.
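The bit-depth arithmetic in point (1) can be checked with the usual rule of thumb of about 6.02 dB per bit. The sketch below assumes an ideal quantizer with no dither or noise shaping, and the helper names `quantization_snr_db` and `bits_left` are hypothetical. The "8-bit" and "16 bits left" figures in the comment correspond to a passage about 48 dB below full scale; at the 60 dB figure also mentioned, the numbers come out closer to 6 and 14 bits.

```python
import math

DB_PER_BIT = 20 * math.log10(2)  # about 6.02 dB per bit

def quantization_snr_db(bits):
    """Theoretical SNR of an ideal quantizer for a full-scale sine (no dither)."""
    return DB_PER_BIT * bits + 10 * math.log10(1.5)  # the familiar 6.02 N + 1.76 dB

def bits_left(bits, level_below_full_scale_db):
    """Bits still exercised by a passage that peaks this far below full scale."""
    return bits - level_below_full_scale_db / DB_PER_BIT

print(f"16-bit: {quantization_snr_db(16):.1f} dB, 24-bit: {quantization_snr_db(24):.1f} dB")
for level in (48.16, 60.0):
    print(f"{level} dB down: {bits_left(16, level):.1f} of 16 bits, {bits_left(24, level):.1f} of 24 bits")
```

This only counts bits. Whether the remaining resolution is audible with proper dither in a real room is exactly what the two sides in this thread disagree about.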

The science behind 192/24:

I don’t have a reference handy, yet I recall that one of the most comprehensive derivations of the needed resolution that convinced me, based on audiological measurements of healthy young individuals, concluded that the maximum needed is around 170/22. Naturally, it is not required for all genres of music and all individuals.

Most music compositions can be recorded, mixed, and delivered just fine in 96/24. As for pop music, 44/16 is definitely sufficient as a delivery format. In practical terms, based on my own recording experience, however limited, I could hear no difference whatsoever between 192/24, 96/24, 48/24, and 44/16 renderings of a composition involving a single vocalist and an acoustic guitar. However, I could hear a distinct difference between such renderings when the sound was produced by a large band playing program/symphonic rock.

I’ve seen perhaps hundreds of discussions on this subject. Here’s my take:

People on Ethan’s side often rely too much on the Nyquist–Shannon sampling theorem, whereas it has serious limitations:

(A) In its original form it is only valid for a function having a Fourier transform that is zero outside of the region of frequencies being considered for the function representation.

In practical terms, if you ever captured, let’s say, a typical rock band performance, using high-quality microphones and multi-channel 192/24 ADC, you’ll notice that the Fourier transform of some of the captured signals yields far from zero coefficients all the way up to 70KHz. Thus, attempting to capture the performance at 44 KHz makes the Nyquist–Shannon theorem inapplicable.

(B) The constructive proof of Nyquist–Shannon theorem involves Whittaker–Shannon interpolation, which is never precisely implemented in practical DACs, because it involves summation, or convolution in an alternative formula, over infinite series.

(C) Fourier transform is linear and time independent. If the signal it is applied to is also generated by a linear time-invariant (LTI) system, then sampling at a rate lower than the minimum required is equivalent to a low-pass filter plus some aliasing, presumably of very low-intensity parts of the upper spectrum.

In practice though, the system may be not LTI, as a microphone could be both non-linear and resonant at high signal levels, and the intensity of the aliasing portion could be non-trivial. It is far safer to record the signal using higher sampling frequency, and later low-pass the signal using more deliberate methods, e.g. including direct FFT on a suitable sliding window, throwing away higher coefficients, and reverse FFT, or a properly designed digital filter.
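The "FFT, throw away the higher coefficients, reverse FFT" idea in (C) can be sketched on a single block as follows. This is only an illustration. It uses a plain DFT to stay self-contained, handles one block rather than a sliding window, and the names `brickwall_lowpass`, `dft` and `idft` plus the two-tone test signal are assumptions. Zeroing bins is a brick-wall filter that rings in the time domain, so a practical version would use overlapping windowed blocks or the "properly designed digital filter" the comment mentions.

```python
import cmath
import math

def dft(x):
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)) for k in range(n)]

def idft(spectrum):
    n = len(spectrum)
    return [
        sum(spectrum[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)).real / n
        for t in range(n)
    ]

def brickwall_lowpass(block, sample_rate, cutoff_hz):
    """Zero every DFT bin above cutoff_hz and transform back (single block only)."""
    n = len(block)
    spectrum = dft(block)
    for k in range(n):
        # Bins k and n - k form a conjugate pair at the same positive frequency.
        freq = min(k, n - k) * sample_rate / n
        if freq > cutoff_hz:
            spectrum[k] = 0
    return idft(spectrum)

# Test block (an assumption): 2 kHz tone plus a 30 kHz partial, sampled at 96 kHz.
FS = 96_000
N = 192  # 500 Hz per bin, so both tones fall exactly on a bin
block = [
    math.sin(2 * math.pi * 2_000 * t / FS) + 0.5 * math.sin(2 * math.pi * 30_000 * t / FS)
    for t in range(N)
]
filtered = brickwall_lowpass(block, FS, 20_000)

# With both tones exactly on bins, only the 2 kHz tone should remain.
low_only = [math.sin(2 * math.pi * 2_000 * t / FS) for t in range(N)]
max_error = max(abs(a - b) for a, b in zip(filtered, low_only))
print(f"max deviation from the 2 kHz tone: {max_error:.2e}")
```

The clean result here depends on the tones sitting exactly on DFT bins. Real music does not, which is where the choice of window and overlap starts to matter.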

Naturally, practical implementations do work close to what Nyquist–Shannon + Whittaker–Shannon would yield even when the sampling frequency is lower than actually required, and the reconstructive interpolation is approximate. But how close?

For some types of signals it is close enough to be indistinguishable to an average ear from the original signal. For other types of signals, and to hearing systems with significant deviations from the average, it is not close enough.

Dear Mark,

What do you think about these two tests:

https://abx.digitalfeed.net/

https://www.npr.org/sections/therecord/2015/06/02/411473508/how-well-can-you-hear-audio-quality

?

I’ll take a look when I have a moment. I did write a blog post on the NPR test. I’ll have to search for it.

Can someone tell me the name of the software in those pictures?

I use the Adobe program Audition to create the spectra and other plots.