The Other Side of The Nyquist/Shannon Coin

A couple of months ago, I was sitting with my youngest son in Mexico City and we started talking about digital audio. Michael received his graduate degree from MIT (I just happen to be wearing my MIT T-shirt today) and was finishing his year as a Fulbright Scholar there. About a month ago, he moved to Zurich, Switzerland to start his first full-time job (he’s a thoroughly international guy…and will now have to master another language). Our conversation steered towards the Nyquist/Shannon Theorem as we discussed how and why digital audio works.

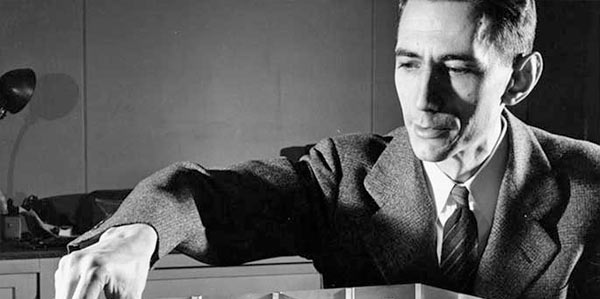

As often happens, he pulled out his smartphone and looked up Claude Shannon on Wikipedia. I admit that I hadn’t investigated this amazing individual previously. But after hearing a few facts from the web article, I was astounded at the importance of his contributions to information theory…and where he grew up. Claude Shannon changed the course of the 20th century with his master’s thesis and subsequent papers on “information theory”.

I’m from Michigan. I was born in Detroit and spent my youth in the nearby suburbs. During high school, my friends and I would head north to ski country almost every weekend. And the route took us up I-75 to the exit at Gaylord, Michigan…the city where Claude Shannon and his father and mother lived. He was born about 20 miles north of there in a beautiful little town called Petoskey, another connection to my skiing past (Boyne Highlands resort required you to pass through Petoskey). I was very pleasantly surprised.

Shannon is known as “the father of information theory” after he published a landmark paper in 1948. Years earlier, as a 21-year-old graduate student at the Massachusetts Institute of Technology (MIT), he wrote his thesis demonstrating that electrical applications of Boolean algebra could construct any logical, numerical relationship. The essence of his remarkable insight is that Boolean algebra could be realized through electrical applications of relays and switching circuits. This was the foundation of both the digital computer and digital circuit design theory.

The digital world in all of its incarnations owes a tremendous debt of gratitude to Claude Shannon. In reality, much of the high-tech gadgetry that we enjoy today stems from his work. A paper extracted from his thesis, “A Symbolic Analysis of Relay and Switching Circuits,” earned Shannon the Alfred Noble Prize in 1939. Howard Gardner called Shannon’s thesis “possibly the most important, and also the most famous, master’s thesis of the century.”

Not bad for a young man from the remote north country of Michigan.

The Nyquist/Shannon Theorem sits at the center of sampling theory and is a frequent topic in the great analog vs. digital debate in high-end audio conversations. Here’s the opening of the article on the theorem: “In the field of digital signal processing, the sampling theorem is a fundamental bridge between continuous-time signals (often called ‘analog signals’) and discrete-time signals (often called ‘digital signals’). It establishes a sufficient condition for a sample rate that permits a discrete sequence of samples to capture all the information from a continuous-time signal of finite bandwidth.

“The theorem also leads to a formula for perfectly reconstructing the original continuous-time function from the samples.”
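For readers who want to see that reconstruction formula in action, here is a minimal sketch in Python. It uses the Whittaker-Shannon (sinc) interpolation formula, which I am assuming is the formula the article refers to. The 1 kHz test tone, the 8 kHz sample rate, and the function names are my own choices for illustration, and because the sum runs over a finite number of samples the result is only an approximation of the ideal, infinite-length formula.

```python
import math

def sinc(x):
    """Normalized sinc: sin(pi*x) / (pi*x), with sinc(0) defined as 1."""
    if x == 0.0:
        return 1.0
    return math.sin(math.pi * x) / (math.pi * x)

def reconstruct(samples, sample_rate, t):
    """Estimate the continuous signal at time t (seconds) from its samples.

    Each sample contributes a sinc pulse centered on its own sample instant;
    the reconstructed value is the sum of all those pulses evaluated at t.
    """
    return sum(s * sinc(t * sample_rate - n) for n, s in enumerate(samples))

# Hypothetical test signal: a 1 kHz sine sampled at 8 kHz for half a second.
sample_rate = 8000.0
freq = 1000.0
samples = [math.sin(2 * math.pi * freq * n / sample_rate) for n in range(4000)]

# Evaluate halfway BETWEEN two sample points, near the middle of the record.
t = 2000.5 / sample_rate
estimate = reconstruct(samples, sample_rate, t)
exact = math.sin(2 * math.pi * freq * t)
error = abs(estimate - exact)
print(f"estimate={estimate:.6f} exact={exact:.6f} error={error:.2e}")
```

The point of the sketch is that the value between two sample instants is recovered from the samples alone. The small remaining error comes from cutting the sum off at 4000 samples, not from the sampling itself.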

More coming…

Hello Mark

A hearing test might need to be a prerequisite for engaging in any critical listening sessions in order to set a baseline for accepting one’s professional opinion. Two years ago I had my ears tested in the soundproof booth at work, only to find diminished high frequency response in my left ear. I wonder if this variable leads to some of the argument over the merits of Hi-res recordings.

Robert Buckner

I haven’t really addressed human hearing in depth…but it’s clearly one of the most critical things in the entire process.

“to capture all the information from a continuous-time signal of finite bandwidth”

The key part of that is the finite bandwidth – we can capture ALL of the information in the BANDWIDTH LIMITED signal. If there’s something that we can’t capture then it’s out of the bandwidth of the sampling system. It’s pretty damn cool! Shannon was a genuine genius to my way of thinking. It’s also worth noting that a discrete-time signal is undefined between the sampling points and that doesn’t matter – we still get all of the information we need to perfectly reconstruct the continuous-time signal within the band limits that we set.

Exactly…enter low-pass filters, etc. The Nyquist/Shannon Theorem only applies if you can guarantee that frequencies higher than half the sample rate don’t get into the ADC.
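Here is a small sketch of what goes wrong without that guarantee. The numbers are hypothetical ones I picked for illustration: an 8 kHz sample rate (so the limit is 4 kHz), a 5 kHz tone above the limit, and a 3 kHz tone below it. Once sampled, the two tones produce the same list of numbers, so nothing downstream can tell them apart.

```python
import math

def sample_cosine(freq_hz, sample_rate_hz, count):
    """Return `count` samples of a unit-amplitude cosine at freq_hz."""
    return [
        math.cos(2 * math.pi * freq_hz * n / sample_rate_hz)
        for n in range(count)
    ]

sample_rate = 8000.0  # half the sample rate is 4000 Hz

# A tone ABOVE half the sample rate, and the in-band tone it folds down to
# (8000 - 5000 = 3000 Hz).
above_limit = sample_cosine(5000.0, sample_rate, 64)
in_band = sample_cosine(3000.0, sample_rate, 64)

# Largest difference between the two sample sequences.
max_diff = max(abs(a - b) for a, b in zip(above_limit, in_band))
print(f"largest difference between the two sample sets: {max_diff:.2e}")
```

The difference is down at the level of floating-point rounding, which is why the out-of-band content has to be filtered out before the converter rather than after it.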

Great stuff Mark. This kind of background info on digital audio would maybe make a fantastic chapter in the book. Just downloaded his paper “A Symbolic Analysis of Relay and Switching Circuits” and will see how much I can understand! Thanks again.

Bob.

I haven’t tried to read the paper but thanks for letting me know it’s available.

The problem is that sound has something like timing resolution, and Shannon is of no help here.

People here often write about JRiver, although jetAudio is better in that it has the so-called BBE/BBEViva features, which are allegedly aimed at restoring the audio to the way it originally sounded. There are also Reverb settings. My observation is that upsampled audio, in fact, begins to sound the same as 44.1/16 with both BBE and Reverb on, albeit more natural.

Thanks Jay.